Please remove it if unallowed

I see a lot of people in here who get mad at AI-generated code and I am wondering why. I wrote a couple of bash scripts with the help of ChatGPT and, if anything, I think it’s great.

Now, I obviously didn’t tell it to write the entire code by itself. That would be a horrible idea. Instead, I would ask it questions along the way and test its output before putting it in my scripts.

I am fairly competent in writing programs. I know how and when to use arrays, loops, functions, conditionals, etc. I just don’t know anything about bash’s syntax. Now, I could have used any other language I knew, but I chose bash because it made the most sense: bash ships with most Linux distros out of the box, so nobody has to install another interpreter or compiler. I don’t like Bash because of its, dare I say, weird syntax, but it made the most sense for my purpose, so I chose it. Also, I have not written anything of this complexity in Bash before, just a bunch of commands on separate lines so that I don’t have to type them one after another. But this one required many rather advanced features. I was not motivated to learn Bash; I just wanted to put my idea into action.

I did start with an internet search, but the guides I found were lacking. I could not easily find how to pass values into a function and return values from it, how to remove a trailing slash from a directory path, how to loop over an array, how to catch errors from the previous command, how to separate the letters and numbers in a string, etc.
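For readers stuck on the same questions, here is a minimal sketch of three of them: passing values into a function and getting a result back, looping over an array, and noticing that a command failed. It is not the OP’s script. The names `add`, `dirs`, and `status` are made up for illustration, and it assumes Bash rather than plain `sh`.

```bash
#!/usr/bin/env bash

# Arguments arrive as $1, $2, ... A function's "return" only sets an exit
# status (0-255), so data is handed back by printing it for the caller to capture.
add() {
    local a=$1 b=$2
    echo $(( a + b ))
}
sum=$(add 2 3)
echo "sum: $sum"

# Loop over every element of an array; the quotes keep items with spaces intact.
dirs=("/tmp" "/var/log" "/home/me/my docs")
for d in "${dirs[@]}"; do
    echo "dir: $d"
done

# Detect a failure by testing the command directly in an if ...
if ! ls /nonexistent-path-for-demo >/dev/null 2>&1; then
    echo "ls failed"
fi

# ... or capture its exit status from $? right where it fails.
status=0
false || status=$?
echo "exit status of the failed command: $status"
```

The `$(...)` capture is the usual workaround for the fact that Bash functions cannot return strings directly.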

That is where ChatGPT helped greatly. I would ask ChatGPT to write these pieces of code whenever I encountered them, then test its code with various inputs to see if it worked as expected. If not, I would tell it which case failed and it would revise the code before I put it in my scripts.
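The “test it with various inputs” step can be sketched as a tiny table of checks. This is only an illustration under assumptions: `strip_trailing_slash`, `split_letters_digits`, and `check` are hypothetical helpers written for this example, not code from the OP or from ChatGPT, and the edge cases are just the ones that seemed worth trying.

```bash
#!/usr/bin/env bash

# Hypothetical helper: drop one trailing slash, but leave the root "/" alone.
strip_trailing_slash() {
    local path=$1
    if [[ $path != "/" ]]; then
        path=${path%/}
    fi
    printf '%s\n' "$path"
}

# Hypothetical helper: split a string such as "abc123" into letters and digits.
split_letters_digits() {
    local s=$1
    local letters=${s//[^[:alpha:]]/}
    local digits=${s//[^[:digit:]]/}
    printf '%s %s\n' "$letters" "$digits"
}

# Compare actual output against the expected value and keep a failure count.
failures=0
check() {
    local description=$1 expected=$2 actual=$3
    if [[ $actual == "$expected" ]]; then
        echo "ok   - $description"
    else
        echo "FAIL - $description: expected '$expected', got '$actual'"
        failures=$(( failures + 1 ))
    fi
}

check "trailing slash removed" "/var/log" "$(strip_trailing_slash /var/log/)"
check "no slash to remove"     "/var/log" "$(strip_trailing_slash /var/log)"
check "root stays root"        "/"        "$(strip_trailing_slash /)"
check "path with spaces"       "/my docs" "$(strip_trailing_slash '/my docs/')"
check "letters then digits"    "abc 123"  "$(split_letters_digits abc123)"
check "digits only"            " 42"      "$(split_letters_digits 42)"

echo "failures: $failures"
```

Keeping the checks in the script makes it cheap to rerun them whenever a snippet is revised.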

Thanks to ChatGPT, someone who has zero knowledge of bash can quickly and easily write fairly advanced bash. I don’t think I could have written what I wrote this quickly the old-fashioned way; I would eventually have written it, but it would have taken far too long. Thanks to ChatGPT I can just write all this quickly and forget about it. If I want to learn Bash and am motivated, I will certainly take the time to learn it properly.

What do you think? What negative experience do you have with AI chatbots that made you hate them?

That is the general reason. I use LLMs to help myself with everything, including coding, even though I know why it’s bad.

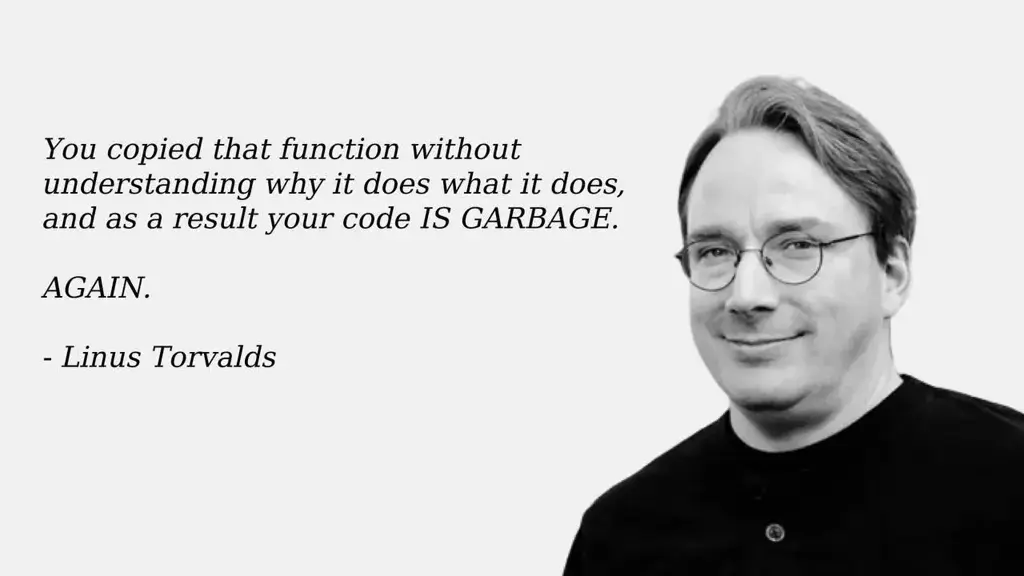

I’m fairly sure Linus would disapprove of my “rip everything off of Stack Overflow and ship it” programming style.

And sometimes that’s my only option when interfacing with other APIs.

And we still using it brother

Based Linus strikes again

This is a good quote, but it lives within a context of professional code development.

Everyone in the modern era starts coding by copying functions without understanding what they do, and people go entire careers in all sorts of jobs and industries copying what came before that ‘worked’ without really understanding the underlying mechanisms.

What’s important is having a willingness to learn and putting in the effort to learn. AI code snippets are super useful for learning even when it hallucinates if you test it and make backups first. This all requires responsible IT practices to do safely in a production environment, and that’s where corporate management eyeing labor cost reduction loses the plot, thinking AI is a wholesale replacement for a competent human as the tech currently stands.

There are probably legitimate uses out there for gen AI, but all the money people have such a hard-on for the unethical uses that now it’s impossible for me to hear about AI without an automatic “ugggghhhhh” reaction.

A lot of the criticism comes from AI results being wrong a lot of the time while sounding convincingly correct. In software, things that appear to be correct but are subtly wrong lead to errors that can be difficult to decipher.

Imagine that your AI was trained on StackOverflow results. It learns from the questions as well as the answers, but the questions will often include snippets of code that just don’t work.

The workflow of using AI resembles something like the relationship between a junior and senior developer. The junior/AI generates code from a spec/prompt, and then the senior/prompter inspects the code for errors. If we remove the junior from the equation to replace with AI, then entry level developer jobs are slashed, and at the same time people aren’t getting the experience required to get to the senior level.

Generally speaking, programmers like to program (many do it just for fun), and many dislike review. AI removes the programming from the equation in favour of review.

Another argument would be that if I generate code that I have to take time to review and figure out what might be wrong with it, it might just be quicker and easier to write it correctly the first time.

Business often doesn’t understand these subtleties. There’s a ton of money being shovelled into AI right now. Not only for developing new models, but for marketing AI as a solution to business problems. A greedy executive that’s only looking at the bottom line and doesn’t understand the solution might be eager to implement AI in order to cut jobs. Everyone suffers when jobs are eliminated this way, and the product rarely improves.

Generally speaking, programmers like to program (many do it just for fun), and many dislike review. AI removes the programming from the equation in favour of review.

This really resonated with me and is an excellent point. I’m going to have to remember that one.

A developer who is afraid of peer review is not a developer at all imo, but more or less an artist who fears exposing how the sausage was made.

I’m not saying a junior who is nervous is not a dev, I’m talking about someone who has been at this for some time, and still can’t handle feedback productively.

They’re saying developers dislike having to review other code that’s unfamiliar to them, not having their code reviewed.

As am I, it’s a two way street. You need to review the code, and have it reviewed.

Nowhere did you say this.

I did, and I stand by what I said.

Review is both taken and given. Peer review does not occur in a single direction, it is a conversation with multiple parties. I can understand if someone misunderstood what I meant though.

Your reply refers to a “junior who is nervous” and “how the sausage is made”, which makes no sense in the context of someone who just has to review code

Two reasons:

- my company doesn’t allow it - my boss is worried about our IP getting leaked

- I find them more work than they’re worth - I’m a senior dev, and it would take longer for me to write the prompt than just write the code

I just don’t know anything about bash’s syntax

That probably won’t be the last time you write Bash, so do you really want to go through AI every time you need to write a Bash script? Bash syntax is pretty simple, especially if you understand the basic concept that everything is a command (i.e. syntax is `<command> [arguments...]`; like `if <condition>` where `<condition>` can be `[ <special syntax> ]` or `[[ <test syntax> ]]`), which explains some of the weird corners of the syntax.

AI sucks for anything that needs to be maintained. If it’s a one-off, sure, use AI. But if you’re writing a script others on your team will use, it’s worth taking the time to actually understand what it’s doing (instead of just briefly reading through the output). You never know if it’ll fail on another machine if it has a different set of dependencies or something.
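To make the “everything is a command” idea concrete, here is a small sketch showing that `if` simply runs a command and branches on its exit status, and how the same idea gives a cheap guard against the “different set of dependencies” problem. `require_commands` is a hypothetical helper invented for this example; `command -v` is the standard builtin it relies on.

```bash
#!/usr/bin/env bash

# `if` runs a command and branches on its exit status (0 means success).
# `[` is itself a command (a builtin); `[[` is a shell keyword used the same way.
if [ -d / ]; then
    echo "/ is a directory"
fi

# Any other command works as the condition, for example grep with -q (quiet).
if printf 'alpha\nbeta\n' | grep -q beta; then
    echo "found beta"
fi

# Hypothetical helper: report missing commands before the script does real work.
require_commands() {
    local missing=0 cmd
    for cmd in "$@"; do
        if ! command -v "$cmd" >/dev/null 2>&1; then
            echo "missing dependency: $cmd" >&2
            missing=1
        fi
    done
    return "$missing"
}

# A function is also just a command, so it can be the condition too.
if require_commands ls grep; then
    echo "all dependencies present"
fi
```

Calling something like `require_commands` at the top of a shared script turns a confusing mid-run failure on someone else’s machine into an immediate, readable message.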

What negative experience do you have with AI chatbots that made you hate them?

I just find dealing with them to take more time than just doing the work myself. I’ve done a lot of Bash in my career (>10 years), so I can generally get 90% of the way there by just brain-dumping what I want to do and maybe looking up 1-2 commands. As such, I think it’s worth it for any dev to take the time to learn their tools properly so the next time will be that much faster. If you rely on AI too much, it’ll become a crutch and you’ll be functionally useless w/o it.

I did an interview with a candidate who asked if they could use AI, and we allowed it. They ended up making (and missing) the same mistake twice in the same interview because they didn’t seem to actually understand what the AI output. I’ve messed around with code chatbots, and my experience is that I generally have to spend quite a bit of time to get what I want, and then I still need to modify and debug it. Why would I do that when I can spend the same amount of time and just write the code myself? I’d understand the code better if I did it myself, which would make debugging way easier.

Anyway, I just don’t find it actually helpful. It can feel helpful because it gets you from 0 to a bunch of code really quickly, but that code will probably need quite a bit of modification anyway. I’d rather just DIY and not faff about with AI.

Your boss should be more worried about license poisoning when you incorporate code that’s been copied from copyleft projects and presented as “generated”.

Perhaps, but our userbase is so small that it’s very unlikely anyone would notice. We are essentially B2B with something like a few hundred active users. We do vet our dependencies religiously, but in all actuality, we could probably get away with pulling in some copyleft code.

We built a Durable task workflow engine to manage infrastructure and we asked a new hire to add a small feature to it.

I checked on them later and they expressed they were stuck on an aspect of the change.

I could tell the code was ChatGPT. I asked “you wrote this with ChatGPT didn’t you?” And they asked how I could tell.

I explained that ChatGPT doesn’t have the full context and will send you on tangents like it has here.

I gave them the docs to the engine and to the integration point and said “try using only these and ask me questions if you’re stuck for more than 40min.”

They went on to become a very strong contributor and no longer use ChatGPT or Copilot.

I’ve tried it myself and it gives me the wrong answers 90% of the time. It could be useful though. If they changed ChatGPT to find and link you to the docs it finds relevant I would love it, but it never does, even when asked.

Phind is better about linking sources. I’ve found that generated code sometimes points me in the right direction, but other times it leads me down a rabbit hole of obsolete syntax or other problems.

Ironically, if you already are familiar with the code then you can easily tell where the LLM went wrong and adapt their generated code.

But I don’t use it much because it’s almost more trouble than it’s worth.

If you’re a seasoned developer who’s using it to boilerplate / template something and you’re confident you can go in after it and fix anything wrong with it, it’s fine.

The problem is it’s used often by beginners or people who aren’t experienced in whatever language they’re writing, to the point that they won’t even understand what’s wrong with it.

If you’re trying to learn to code or code in a new language, would you try to learn from somebody who has only half a clue what he’s doing and will confidently tell you things that are objectively wrong? That’s much worse than just learning to do it properly yourself.

I’m a seasoned dev and I was at a launch event when an edge case failure reared its head.

In less than half an hour after pulling out my laptop to fix it myself, I’d used Cursor + Claude 3.5 Sonnet to:

- Automatically add logging statements to help identify where the issue was occurring

- Fix the issue, once I’d identified it and described it in the chat

- Remove the logging statements, after which I pushed the update

I never typed a single line of code and never left the chat box.

My job is increasingly becoming Henry Ford drawing the ‘X’ and not sitting on the assembly line, and I’m all for it.

And this would only have been possible in just the last few months.

We’re already well past the scaffolding stage. That’s old news.

Developing has never been easier or more plain old fun, and it’s getting better literally by the week.

Edit: I agree about junior devs not blindly trusting them though. They don’t yet know where to draw the X.

The other day we were going over some SQL query with a younger colleague and I went “wait, what was the function for the length of a string in SQL Server?”, so he typed the whole question into chatgpt, which replied (extremely slowly) with some unrelated garbage.

I asked him to let me take the keyboard, typed “sql server string length” into google, saw LEN in the excerpt from the first result, and went on to do what I’d wanted to do, while in another tab chatgpt was still spewing nonsense.

LLMs are slower, several orders of magnitude less accurate, and harder to use than existing alternatives, but they’re extremely good at convincing their users that they know what they’re doing and what they’re talking about.

That causes the people using them to blindly copy their useless buggy code (that even if it worked and wasn’t incomplete and full of bugs would be intended to solve a completely different problem, since users are incapable of properly asking what they want and LLMs would produce the wrong code most of the time even if asked properly), wasting everyone’s time and learning nothing.

Not that blindly copying from stack overflow is any better, of course, but stack overflow or reddit answers come with comments and alternative answers that if you read them will go a long way to telling you whether the code you’re copying will work for your particular situation or not.

LLMs give you none of that context, and are fundamentally incapable of doing the reasoning (and learning) that you’d do given different commented answers.

They’ll just very convincingly tell you that their code is right, correct, and adequate to your requirements, and leave it to you (or whoever has to deal with your pull requests) to find out without any hints why it’s not.

This is my big concern…not that people will use LLMs as a useful tool. That’s inevitable. I fear that people will forget how to ask questions and learn for themselves.

Exactly. Maybe you want the number of unicode code points in the string, or perhaps the byte length of the string. It’s unclear what an LLM would give you, but the docs would clearly state what that length is measuring.
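The same ambiguity is easy to demonstrate in Bash. This is a sketch under assumptions: the string is spelled out as explicit UTF-8 bytes so the example does not depend on the file’s encoding, and `byte_length` is a made-up helper name.

```bash
#!/usr/bin/env bash

# "naïve" written as explicit UTF-8 bytes: five characters, but the ï takes two bytes.
s=$'na\xc3\xafve'

# ${#s} counts characters according to the current locale, so its result
# depends on how the machine is configured.
echo "length in current locale: ${#s}"

# Counting the bytes that printf emits gives the byte length regardless of locale.
byte_length() {
    printf '%s' "$1" | wc -c | tr -d ' '
}
echo "length in bytes: $(byte_length "$s")"
```

Which of the two numbers is “the length” depends on what you need it for, which is exactly the kind of detail the documentation spells out and a generated answer may leave unstated.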

Use LLMs to come up with things to look up in the official docs, don’t use it to replace reading docs. As the famous Russian proverb goes: trust, but verify. It’s fine to trust what an LLM says, provided you also go double check what it says in more official docs.

I can feel that frustrated look when someone uses chatGPT for such a tiny reason

Because despite how easy it is to dupe people into thinking your methods are altruistic- AI exists to save money by eradicating jobs.

AI is the enemy. No matter how you frame it.

I have a coworker who is essentially building a custom program in Sheets using AppScript, and has been using CGPT/Gemini the whole way.

While this person has a basic grasp of the fundamentals, there’s a lot of missing information that gets filled in by the bots. Ultimately after enough fiddling, it will spit out usable code that works how it’s supposed to, but honestly it ends up taking significantly longer to guide the bot into making just the right solution for a given problem. Not to mention the code is just a mess - even though it works there’s no real consistency since it’s built across prompts.

I’m confident that in this case and likely in plenty of other cases like it, the amount of time it takes to learn how to ask the bot the right questions in totality would be better spent just reading the documentation for whatever language is being used. At that point it might be worth it to spit out simple code that can be easily debugged.

Ultimately, it just feels like you’re offloading complexity from one layer to the next, and in so doing quickly acquiring tech debt.

Exactly my experience as well. Using AI will take about the same amount of time as just doing it myself, but at least I’ll understand the code at the end if I do it myself. Even if AI was a little faster to get working code, writing it yourself will pay off in debugging later.

And honestly, I enjoy writing code more than chatting with a bot. So if the time spent is going to be similar, I’m going to lean toward DIY every time.

Lots of good comments here. I think there’s many reasons, but AI in general is being quite hated on. It’s sad to me - pre-GPT I literally researched how AI can be used to help people be more creative and support human workflows, but our pipelines around the AI are lacking right now. As for the hate, here’s a few perspectives:

- Training data is questionable/debatable ethics,

- Amateur programmers don’t build up the same “code muscle memory”,

- It’s being treated as a sole author (generate all of this code for me) instead of like a ping-pong pair programmer,

- The time saved writing code isn’t being used to review and test the code more carefully than it was before,

- The AI is being used for problem solving, where it’s not ideal, as opposed to code-from-spec where it’s much better,

- Non-Local AI is scraping your (often confidential) data,

- Environmental impact of the use of massive remote LLMs,

- Can be used (according to execs, anyways) to replace entry level developers,

- Devs can have too much faith in the output because they have weak code review skills compared to their code writing skills,

- New programmers can bypass their learning and get an unrealistic perspective of their understanding; this one is most egregious to me as a CS professor, where students and new programmers often think the final answer is what’s important and don’t see the skills they strengthen along the way to the answer.

I like coding with local LLMs and asking occasional questions to larger ones. The code on larger code bases (with these small, local models) is often pretty nonsensical, but it improves with the right approach: provide it documented functions and examples of a strong and consistent code style, write your test cases in advance so you can verify the outputs, and use it as an extension of IDE capabilities (like generating repetitive lines) rather than a replacement for your problem solving.

I think there are a lot of reasons to hate on it, but I think it’s because the ways to use it effectively are still being figured out.

Some of my academic colleagues still hate IDEs because tab completion, fast compilers, in-line documentation, and automated code linting (to them) means you don’t really need to know anything or follow any good practices, your editor will do it all for you, so you should just use vim or notepad. It’ll take time to adopt and adapt.

People who use LLMs to write code (incorrectly) perceived their code to be more secure than code written by expert humans.

Lol.

We literally had an applicant use AI in an interview, fail the same step twice, and at the end we asked how confident they were in their code and they said “100%” (we were hoping they’d say they want time to write tests). Oh, and my coworker and I each found two different bugs just by reading the code. That candidate didn’t move on to the next round. We’ve had applicants write buggy code, but they at least said they’d want to write some tests before they were confident, and they didn’t use AI at all.

I thought that was just a one-off, it’s sad if it’s actually more common.

OP was able to write a bash script that works… on his machine 🤷 that’s far from having to review and send code to production either in FOSS or private development.

I also noticed that they were talking about sending arguments to a custom function? That’s like a day-one lesson if you already program. But this was something they couldn’t find in regular search?

Maybe I misunderstood something.

Exactly. If you understand that functions are just commands, then it’s quite easy to extrapolate how to pass arguments to that function:

    function my_func () {
        echo $1 $2 $3  # prints a b c
    }
    my_func a b c

Once you understand that core concept, a lot of Bash makes way more sense. Oh, and most of the syntax I provided above is completely unnecessary, because Bash…

Hmm, I’m having trouble understanding the syntax of your statement.

Is it

(People who use LLMs to write code incorrectly) (perceived their code to be more secure) (than code written by expert humans.)

Or is it

(People who use LLMs to write code) (incorrectly perceived their code to be more secure) (than code written by expert humans.)

The “statement” was taken from the study.

We conduct the first large-scale user study examining how users interact with an AI Code assistant to solve a variety of security related tasks across different programming languages. Overall, we find that participants who had access to an AI assistant based on OpenAI’s codex-davinci-002 model wrote significantly less secure code than those without access. Additionally, participants with access to an AI assistant were more likely to believe they wrote secure code than those without access to the AI assistant. Furthermore, we find that participants who trusted the AI less and engaged more with the language and format of their prompts (e.g. re-phrasing, adjusting temperature) provided code with fewer security vulnerabilities. Finally, in order to better inform the design of future AI-based Code assistants, we provide an in-depth analysis of participants’ language and interaction behavior, as well as release our user interface as an instrument to conduct similar studies in the future.

My objections:

- It doesn’t adequately indicate “confidence”. It could return “foo” or “!foo” just as easily, and if that’s one term in a nested structure, you could spend hours chasing it.

- So many hallucinations-- inventing methods and fields from nowhere, even in an IDE where they’re tagged and searchable.

Instead of writing the code now, you end up having to review and debug it, which is more work IMO.

Now, I obviously didn’t tell it to write the entire code by itself. […]

I am fairly competent in writing programs.

Go ahead using it. You are safe.

As a cybersecurity guy, it’s things like this study, which said:

Overall, we find that participants who had access to an AI assistant based on OpenAI’s codex-davinci-002 model wrote significantly less secure code than those without access. Additionally, participants with access to an AI assistant were more likely to believe they wrote secure code than those without access to the AI assistant.

FWIW, at this point, that study would be horribly outdated. It was done in 2022, which means it probably took place in early 2022 or 2021. The models used for coding have come a long way since then, the study would essentially have to be redone on current models to see if that’s still the case.

People’s perceptions have probably not changed, but whether the code is actually insecure would need to be reassessed.

I think it’s more appalling because they should have assumed this was early tech and therefore less trustworthy. If anything, I’d expect more people to believe their code is secure today using AI than back in 2021/2022 because the tech is that much more mature.

I’m guessing an LLM will make a lot of noob mistakes, especially in languages like C(++) where a lot of care needs to be taken for memory safety. LLMs don’t understand code, they just look at a lot of samples of existing code, and a lot of code available on the internet is terrible from a security and performance perspective. If you’re writing it yourself, hopefully you’ve been through enough code reviews to catch the more common mistakes.