LOL from the comments

[…] “people having unreasonably high expectations for epistemics in published work” is definitely a cost of dealing with EAs!

It is funny, as in my experience these unreasonably high expectations for epistemics only apply to things they disagree with. See, for example, the LW guy who used an IQ and education list published by a tabloid (which the tabloid said it had from a different source, but it wasn’t linked). Versus saying something they disagree with, which requires you to produce not only the scientific article you got it from, but also the specific paragraph from the article and a list of steelmanned counterarguments.

Putting the EA in sealion

Bayes’ theorem, baby!

I was really confused about the article until I figured out EA isn’t Electronic Arts.

It stands for Effective Altruism, which isn’t about either of those things.

Which also isn’t about either of those things.

Lucky you. The infection has not taken hold, you can still save yourself. Run!

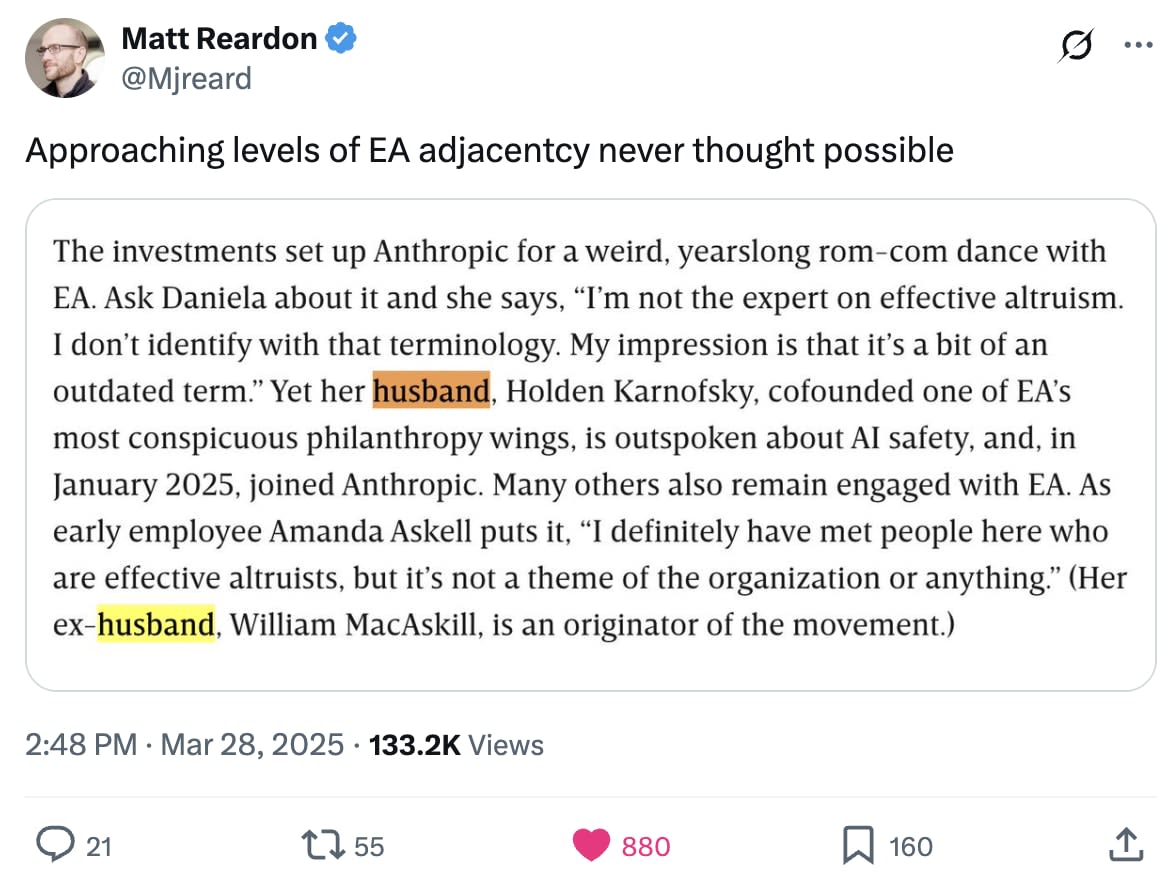

This commenter may be saying something we already knew, but it’s nice to have the confirmation that Anthropic is chock full of EAs:

(I work at Anthropic, though I don’t claim any particular insight into the views of the cofounders. For my part I’ll say that I identify as an EA, know many other employees who do, get enormous amounts of value from the EA community, and think Anthropic is vastly more EA-flavored than almost any other large company, though it is vastly less EA-flavored than, like, actual EA orgs. I think the quotes in the paragraph of the Wired article give a pretty misleading picture of Anthropic when taken in isolation and I wouldn’t personally have said them, but I think “a journalist goes through your public statements looking for the most damning or hypocritical things you’ve ever said out of context” is an incredibly tricky situation to come out of looking good and many of the comments here seem a bit uncharitable given that.)

“a journalist goes through your public statements looking for the most damning or hypocritical things you’ve ever said out of context” is an incredibly tricky situation to come out of looking good

only if, you know, your public statements contain lots of damning things

If you give me six thousand tweets written by the hand of the most damned and hypocritical of men, I will find something in them which will cancel him. —@RichelieuArmand, probably

… the EAristocrats!