- cross-posted to:

- [email protected]

- [email protected]


This write-up is really, really good. I think about these concepts whenever people describe astrophotography or other computation-heavy photography as fake, software-generated images, when in reality translating sensor data into a graphical representation for the human eye (with all the quirks of human vision, especially around brightness and color) requires conscious decisions about how the charges or voltages on a sensor should be turned into pixels in a digital file.

Added https://maurycyz.com/index.xml to my feed reader!

#nofilter

Not sure how worth mentioning this is, considering how good the overall write-up is.

The human visual system does have a non-linear perception of luminance, but that isn't why the camera data needs to be adjusted. It needs adjusting because the display has a non-linear response: the camera data is adjusted so that the light coming off the display's face is linear with respect to the scene. So it's the display's non-linear response that is being corrected, not the human visual system.
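To illustrate the point about correcting for the display, here is a minimal sketch. It assumes an idealized power-law display with gamma 2.2 (real sRGB uses a piecewise curve), and `display_response`/`gamma_encode` are hypothetical helper names. Feeding linear data straight to the display darkens mid-tones, while pre-encoding with the inverse exponent makes the emitted light match the scene again.

```python
# Minimal sketch, assuming an idealized power-law display (gamma 2.2).
# Real sRGB uses a piecewise transfer curve; this is a simplification.
DISPLAY_GAMMA = 2.2


def display_response(signal: float) -> float:
    """Light emitted by the hypothetical display for a normalized signal in [0, 1]."""
    return signal ** DISPLAY_GAMMA


def gamma_encode(linear: float) -> float:
    """Pre-distort linear scene light so the display's response cancels out."""
    return linear ** (1.0 / DISPLAY_GAMMA)


if __name__ == "__main__":
    for scene in (0.0, 0.18, 0.5, 1.0):
        naive = display_response(scene)  # linear data sent to the display as-is
        corrected = display_response(gamma_encode(scene))  # encoded first
        print(f"scene={scene:.2f} naive={naive:.3f} corrected={corrected:.3f}")
```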

There is a bunch more that can be done and described.

Good post! Always nice to see actual technology on this sub.

I think the penultimate photo looks better than the final one that has the luminance and stuff balanced, but maybe that’s just me.

Good read. Funny how I always thought the sensor read RGB directly, instead of simple light levels through a filter pattern.

You could treat each little 2x2 block as one pixel and call it RGGB. It's done this way because our eyes are much more sensitive to the middle wavelengths: our red and blue cones can detect some green too, so detail in the green channel matters much more.
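A minimal sketch of the "2x2 block as one pixel" idea, assuming an RGGB layout starting at the top-left photosite. `filter_color` and `superpixel_demosaic` are hypothetical helpers, not any camera's actual pipeline; the "superpixel" approach simply averages the two greens in each block and halves the resolution.

```python
# Minimal sketch of an RGGB Bayer mosaic and a naive "superpixel" demosaic.
# Hypothetical helpers for illustration only.
from collections import Counter

RGGB = (("R", "G"), ("G", "B"))  # top-left 2x2 tile, repeated across the sensor


def filter_color(row: int, col: int) -> str:
    """Color of the filter over the photosite at (row, col)."""
    return RGGB[row % 2][col % 2]


def superpixel_demosaic(raw: list[list[float]]) -> list[list[tuple[float, float, float]]]:
    """Collapse each 2x2 RGGB block into one (R, G, B) pixel, averaging both greens."""
    out = []
    for r in range(0, len(raw) - 1, 2):
        row_out = []
        for c in range(0, len(raw[r]) - 1, 2):
            red = raw[r][c]
            green = (raw[r][c + 1] + raw[r + 1][c]) / 2
            blue = raw[r + 1][c + 1]
            row_out.append((red, green, blue))
        out.append(row_out)
    return out


if __name__ == "__main__":
    # Half of all photosites sit under a green filter.
    print(Counter(filter_color(r, c) for r in range(4) for c in range(4)))
    raw = [[0.2, 0.6, 0.3, 0.7],
           [0.5, 0.1, 0.4, 0.2]]
    print(superpixel_demosaic(raw))
```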

A similar thing is done in JPEG: the luma channel, which is kept at full resolution while the color channels are often subsampled, is weighted most heavily toward green.
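For the JPEG point, a small sketch of the luma formula JFIF uses (ITU-R BT.601 weights). The dictionary and function names are just for illustration. Equal amounts of pure red, green, and blue give very different luma, with green contributing the most.

```python
# Minimal sketch of JPEG/JFIF luma (Y) from RGB, using the BT.601 weights.
LUMA_WEIGHTS = {"R": 0.299, "G": 0.587, "B": 0.114}


def luma(r: float, g: float, b: float) -> float:
    """Luma Y for 8-bit RGB values, as in the JFIF RGB -> YCbCr conversion."""
    return LUMA_WEIGHTS["R"] * r + LUMA_WEIGHTS["G"] * g + LUMA_WEIGHTS["B"] * b


if __name__ == "__main__":
    for name, rgb in {"red": (255, 0, 0), "green": (0, 255, 0), "blue": (0, 0, 255)}.items():
        print(name, round(luma(*rgb), 1))
```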

For a while the best/fanciest digital cameras had three CCDs, one for each RGB color channel. I’m not sure if that’s still the case or if the color filter process is now good enough to replace it.

3-chip CMOS sensors are roughly 20 to 25 years out-of-date technology. Mosaic-pattern sensors have eclipsed them on most imaging metrics.

There are some sensors that stack each color vertically instead of using a Bayer filter. I don't think they're popular, because their low-light performance is worse.

This was sold by Foveon, and it had some interesting differences. The sensors were layered, which among other things meant they didn't suffer from the color moiré patterns that Bayer-filter sensors can produce.

At least for astronomy, you just have one sensor (they’re all CMOS nowadays) and rotate out the RGB filters in front of it.
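A minimal sketch of combining the filter-wheel exposures into one color image. It assumes the three monochrome frames are already aligned and dark-subtracted, and it only normalizes by exposure time (a simplification; real workflows also calibrate each filter's response). `combine_rgb` is a hypothetical helper.

```python
# Minimal sketch: merge aligned monochrome frames shot through R, G, B filters.
# Assumes registration and dark subtraction are already done.
def combine_rgb(frames: dict[str, list[list[float]]],
                exposure_s: dict[str, float]) -> list[list[tuple[float, float, float]]]:
    """Zip per-filter frames into RGB pixels, scaled to counts per second."""
    red, green, blue = (frames[k] for k in ("R", "G", "B"))
    t_r, t_g, t_b = (exposure_s[k] for k in ("R", "G", "B"))
    return [
        [(r / t_r, g / t_g, b / t_b) for r, g, b in zip(row_r, row_g, row_b)]
        for row_r, row_g, row_b in zip(red, green, blue)
    ]


if __name__ == "__main__":
    frames = {"R": [[120.0, 60.0]], "G": [[90.0, 45.0]], "B": [[300.0, 150.0]]}
    exposure = {"R": 60.0, "G": 60.0, "B": 120.0}  # blue got a longer exposure
    print(combine_rgb(frames, exposure))
```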

Is that the case for big ground and space telescopes too? I can imagine this could cause wobbling.

Btw, is that also how infrared and X-ray telescopes work?

It sure is! The monochrome sensors are also great for narrowband imaging, where each filter lets through only a narrow band around one specific wavelength of light (like hydrogen-alpha), which lets you do false-color imaging.
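As an example of the false-color step, here is a minimal sketch using the commonly used "Hubble palette" (SHO) convention: S-II to red, H-alpha to green, O-III to blue. Scaling each channel by its own maximum is an assumption made for brevity (H-alpha is usually much brighter than the other lines); real processing uses more careful stretches. Frames are flattened pixel lists, and the function names are hypothetical.

```python
# Minimal sketch of narrowband false color with the SHO ("Hubble") palette.
def normalize(frame: list[float]) -> list[float]:
    """Scale a frame so its brightest pixel is 1.0 (all zeros stay zero)."""
    peak = max(frame)
    return [v / peak if peak > 0 else 0.0 for v in frame]


def sho_palette(s_ii: list[float], h_alpha: list[float],
                o_iii: list[float]) -> list[tuple[float, float, float]]:
    """Map S-II -> R, H-alpha -> G, O-III -> B for flattened pixel lists."""
    return list(zip(normalize(s_ii), normalize(h_alpha), normalize(o_iii)))


if __name__ == "__main__":
    # H-alpha is far stronger here, but each channel gets its own scaling.
    print(sho_palette([1.0, 2.0], [10.0, 40.0], [3.0, 6.0]))
```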

IR is basically the same. Here's the page on JWST's filters. No clue about X-ray scopes, but IIRC they don't use any kind of traditional CMOS or CCD sensor.

Wild how far technology has marched on, and yet we're still essentially using the same basic idea behind Technicolor. But hey, if it works!

This is why I don't say I need to edit my photos; I say I need to process them. Laypeople clearly understand "editing" as Photoshop, and while they don't necessarily understand processing, many people still remember taking film to a store and getting it processed into photos they can give someone.

How do you get a sensor data image from a camera?

RAW files. Even then, you mostly see the result of whatever processing your raw viewer/editor applies. But if you know how to get at it and use it, the same raw sensor data is there.

Most cameras will let you export raw files, and a lot of phones do as well (although the phone ones aren't great, since phones usually do a lot of processing on them before giving you the normal picture).

My understanding is that truly raw phone data also has a lot of lens distortion, and proprietary code written by the camera vendor uses specific algorithms to undo that effect. That's the part phone tinkerers complain isn't open source (well, it does lots of other things with the camera too).
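For a rough idea of what undoing lens distortion involves, here is a minimal sketch using the radial part of the Brown-Conrady model on normalized image coordinates. The coefficients are made-up illustrative values, not taken from any real phone, and the vendors' actual algorithms are proprietary and unknown here. Since the model maps undistorted to distorted points, correction inverts it, done here by fixed-point iteration, which works for mild distortion.

```python
# Minimal sketch of radial lens-distortion correction (two-term radial model).
# K1, K2 are hypothetical barrel-distortion coefficients for illustration.
K1, K2 = -0.15, 0.02


def distort(x: float, y: float) -> tuple[float, float]:
    """Map an ideal (undistorted) normalized point to where the lens images it."""
    r2 = x * x + y * y
    scale = 1 + K1 * r2 + K2 * r2 * r2
    return x * scale, y * scale


def undistort(xd: float, yd: float, iterations: int = 20) -> tuple[float, float]:
    """Invert distort() by fixed-point iteration; adequate for mild distortion."""
    x, y = xd, yd
    for _ in range(iterations):
        r2 = x * x + y * y
        scale = 1 + K1 * r2 + K2 * r2 * r2
        x, y = xd / scale, yd / scale
    return x, y


if __name__ == "__main__":
    xd, yd = distort(0.5, 0.4)
    print("distorted:", xd, yd)
    print("recovered:", undistort(xd, yd))
```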

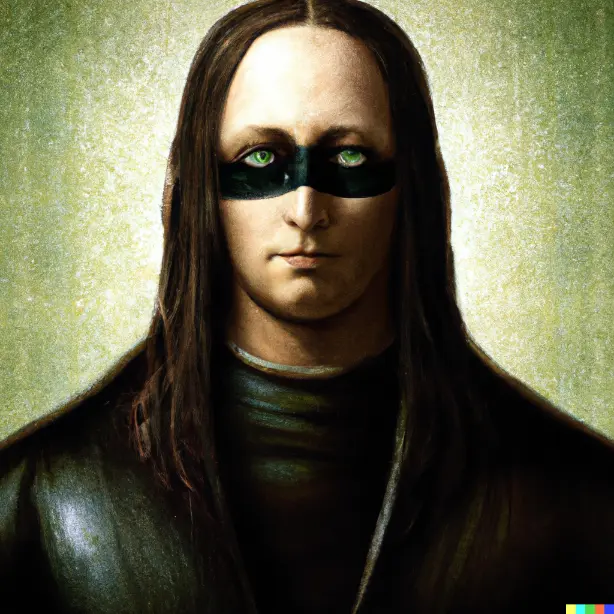

Fake deer head

I'm a little confused about how the demosaicing step produced a green-tinted photo. I understand that there are twice as many green pixels, but does the naive demosaic process just show the averaged sensor data, which would intrinsically have "too much" green, or was there an error in the demosaicing?

Yes, given the comment about averaging with the neighbours, green will be overrepresented in the average. An additional (smaller) factor is that the colour filters aren't perfect, and green in particular often has significant sensitivity to wavelengths that the red and blue colour filters are meant to pick up.

Edit: one other factor I forgot: green photosites are often more sensitive than the red and blue ones.
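One simple way to remove a green cast like this is "gray world" white balance, sketched below under the assumption that the scene averages out to neutral gray. It scales red and blue so their means match green's. The helpers are hypothetical, and real raw converters typically use per-camera white-balance multipliers instead.

```python
# Minimal sketch of gray-world white balance to remove a green cast.
def gray_world_gains(pixels: list[tuple[float, float, float]]) -> tuple[float, float, float]:
    """Per-channel (R, G, B) gains that make each channel's mean equal green's.

    Assumes the red and blue means are non-zero.
    """
    n = len(pixels)
    mean_r = sum(p[0] for p in pixels) / n
    mean_g = sum(p[1] for p in pixels) / n
    mean_b = sum(p[2] for p in pixels) / n
    return mean_g / mean_r, 1.0, mean_g / mean_b


def apply_gains(pixels: list[tuple[float, float, float]],
                gains: tuple[float, float, float]) -> list[tuple[float, ...]]:
    """Multiply each pixel's channels by the matching gain."""
    return [tuple(v * g for v, g in zip(p, gains)) for p in pixels]


if __name__ == "__main__":
    # A green-tinted patch: green reads about 2x red and 4x blue.
    tinted = [(0.2, 0.4, 0.1), (0.4, 0.8, 0.2)]
    gains = gray_world_gains(tinted)
    print(gains)
    print(apply_gains(tinted, gains))
```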

Plus, the human eye is more sensitive to green than to the other channels.

Green is not a creative colour.

Is this you? If so, my wife wonders what camera and software you used!

This info may still be present in the files' metadata (EXIF): download them and inspect them with any software that displays that kind of info. I'm not proficient at it; I'm just a nerd who did it a decade ago when I was into photography.

That’s crazy.