Edit: Clickbait…

I don’t even need to read the article to know this headline is 100% bullshit.

Doubt.

oh no, explain this then https://www.top500.org/lists/top500/list/2023/11/

The scheduler is limited but it can still schedule across all the threads and cores in a given system. It’s just doing it less efficiently. The headline is misleading.

The article itself isn’t very clear. It took me a while to figure out the scheduler algorithms were affected rather than the kernel as a whole.

It’s good then that we have a choice of schedulers :)

Supercomputers are usually just a lot of smaller computers that happen to be connected with very efficient networking. Then you use something like MPI to pass messages between the nodes, so the whole cluster can work on one problem as if it were a single big machine.

Different kernel?

Pure misinformation.

https://news.ycombinator.com/item?id=38260935

tldr it’s a clickbait title.

I have already seen this horrible headline posted to (and deleted from) THIS community earlier today.

Me: So Linux has had this problem since the late 90s?

Brain: Try that again old man.

Could someone please summarize this in simple terms?

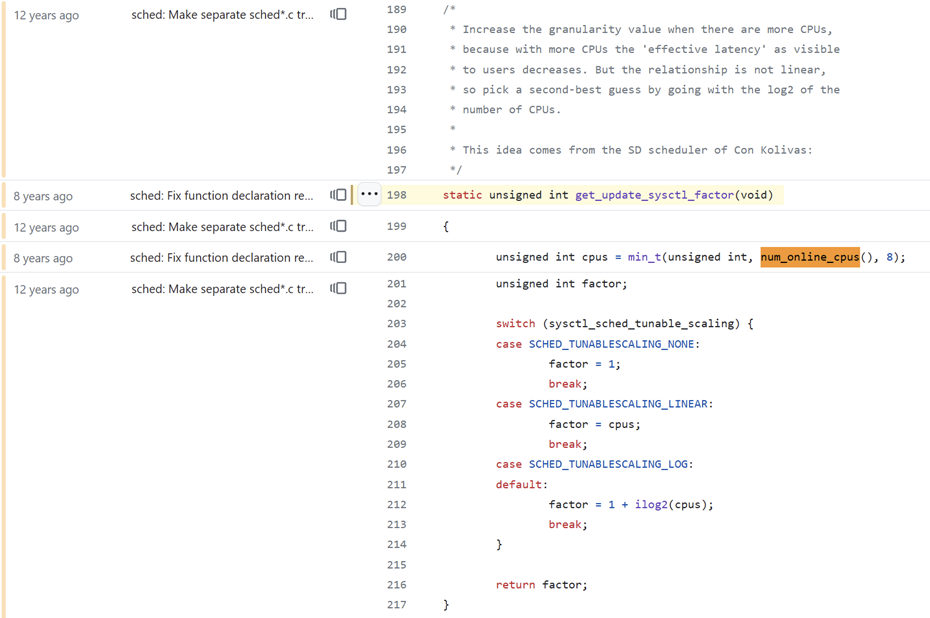

The task scheduler in the Linux kernel was using a max value of 8 in its calculations rather than the actual number of cores in the machine.

The machine could have 128 cores, but the scheduler would base its calculations on 8 cores. As a result, the processes on the machine would run for less time than they should.
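For anyone who wants to see the arithmetic, here is a rough Python sketch of the capped calculation described above. It assumes the scheduler's default logarithmic scaling, where the factor is 1 + log2 of the CPU count and the count is capped at 8. The function names and the base slice value are made up for illustration; this is not the kernel code.

```python
CPU_CAP = 8            # the cap discussed above
BASE_SLICE_MS = 0.75   # illustrative base value, an assumption for this sketch


def scaling_factor(online_cpus: int, cap: int = CPU_CAP) -> int:
    """Return 1 + floor(log2(min(online_cpus, cap)))."""
    cpus = max(1, min(online_cpus, cap))
    # bit_length() - 1 is an exact integer floor(log2(n)) for n >= 1
    return 1 + (cpus.bit_length() - 1)


def scaled_slice_ms(online_cpus: int, cap: int = CPU_CAP) -> float:
    """Scale the base slice by the (capped) factor."""
    return BASE_SLICE_MS * scaling_factor(online_cpus, cap)


if __name__ == "__main__":
    for n in (1, 4, 8, 128):
        print(n, scaling_factor(n), scaled_slice_ms(n))
```

With the cap in place, 8 cores and 128 cores get the same factor (4). Without it, 128 cores would get a factor of 8. Either way the scheduler still uses every core; only the tuning values stop growing past 8.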

Damn, I hope it’s, like the other commenter said, wrong.

Claim is wrong, disregard.

Thank you

This reminds me of code I’ve written in the past and reviewed years later: At first glance it looks wrong, especially if magic numbers are involved. Then I start to think about it (hopefully with some hints in the comments 😉) and soon remember that I spent a lot of time thinking about this specific line back then and wrote it fully intentionally to limit the effect of variables in my calculations 😁

Huge if true.