just think: an AI trained on depressed social justice queers

wonder what Hive is making of Bluesky

“you took a perfectly good pocket calculator and gave it anxiety”

Well, that sucks. I’ve always had a lingering respect for Mullenweg because I think WordPress.com is a good service, I really like Simplenote, and I thought that Automattic taking over Tumblr could not make stuff worse after the service had been gelded by the porn-haters.

But his latest moderation antics at Tumblr, and now this (although he’s hardly alone: Reddit is also gonna sell its users’ content to the AI mills), have really shown his true face.

(I’ll never forget laughing at him for losing tens of thousands worth of Leica gear in a lost/stolen luggage incident many years back)

tens of thousands worth of Leica gear

of course he’s one of those

Well his tag is “photomatt” and AFAIK he was pretty involved in digital photography when that was the cool thing to be doing.

I found the post, from 2008: https://ma.tt/2008/05/dont-check-your-valuables/

All very expensive at the time, especially the Noctilux!

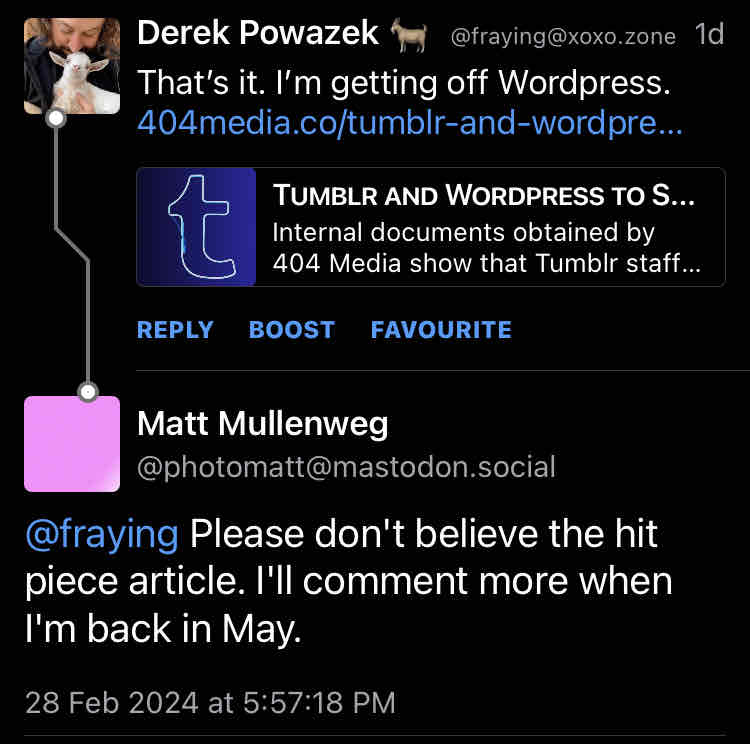

The sign of a great CEO is that sense of urgency.

“We’re facing the biggest PR storm in our history, no hurry”.

Where is he, btw? Rehab?

Probably on a photography trip to some exotic and expensive place.

that’s the fun part. He’s been on “samattical” since the beginning of Feb. Plenty of time for Twitter harassment, but this one will have to wait

Banning trans women on Tumblr who are mad at him about being harassed through the platform: Important.

Feuding with said trans women on Twitter: Important.

Explaining their decision to sell the users’ data: Not important.

And to add, admitting that Tumblr had an admin who charged for banning trans women: Important.

Explaining wtf that was all about: Not important.

He probably should be in rehab.

We can’t have nice things.

The full text describes clusterfuck after clusterfuck. It’s worth registering (it’s free to read) even just for this one.

no i don’t have an unpaywalled copy, but it’s 404 so i’ll assume the article delivers on the headline and intro paras

404 is the first time I’ve ever felt like a news source (that isn’t a journalist I know personally) has been consistently high-quality enough to be worth paying for

If AI is trained on some subset of human interactions and subjects, let’s call it set A, and someone uses the AI to learn about a subset of human interactions and subjects, let’s call it set B, then there necessarily must be some shared set C of subjects and interactions contained within both A and B. In some cases the information may be literally mirrored; in others it may simply be memes or ideas that pop up over and over again. Note that I am talking about perspectives on things, metadata if you will, about the things contained within sets A and B, just as much as I am talking about the specific things themselves in A and B.

The relative size of set C can be thought of as a practical measure of the magnitude difference between pattern matching and knowledge in a given context. AI design seems to treat set C as always trivial in size compared to set A or set B, and does not seem concerned with the possible cross-talk effects that arise from set A and set B not truly being linearly independent. Even worse, this cross-talk creates an invisible distortion that degrades the usefulness of the AI but cannot be fundamentally distinguished from the correctly functioning aspects of the AI by inspecting the AI alone rather than the data sets.

The larger set C becomes, the exponentially quicker the collective wisdom of human conversations online is strip-mined and obscured behind machine-generated fluff.

Everyone wants to talk about AI from the angle of the genius computer programmer making an intelligent machine because that is sexy, but what these “AI” really represent is the power of good data sets and the priceless value of the human beings who methodically contribute high-quality content to those data sets. In other words, AI and LLMs are about humans intelligently structuring set A and set B so that set C isn’t a problem. AI is not some magic thing that needs humans only to get it started on quality data sets; rather, AI is an expression of how powerful our collective conversations and creations are when we create structures out of them that computers can interface with.

Silicon Valley and the 1% are trying to convince us that the collective power of the crowd is actually something they own, by slapping “AI” on it and calling it a day. But even with just the basic argument I made above (setting aside the fact that AI also just hallucinates shit), there is no way that AI in its current form is sustainable long term, especially alongside the simultaneous divestment from and devaluing of the systems that created the high-quality training data sets in the first place. The sensemaking of AI HAS to collapse in on itself along any meaningful metric if things keep heading in this direction, and the whiplash that causes will hurt a lot of humans.

definitely one of the longest ways to say “they’re thieving little shits trying to sell things back to us after slapping a lick of paint on it” I’ve seen in a long while; unfortunately there’s no achievement badge for that tho

this reads like taking the weird promises I’ve read from many different technologies and then extrapolating from them as if they were a description of reality

(academic blockchain theorising has often been of this genre for example)

Great, now LLMs are gonna format all their output as email-indented reblog chains.