It’s not just every tech company, it’s every company. And it’s terrifying - it’s like giving people who don’t know how to ride a bike a 1000hp motorcycle! The industry does not have guardrails in place, and the public attitude of “ChatGPT can do it”, without any thought to checking the output, is horrifying.

Basically the internet.

We really need some sort of regulation to prevent AI from catastrophically fucking up everything lol

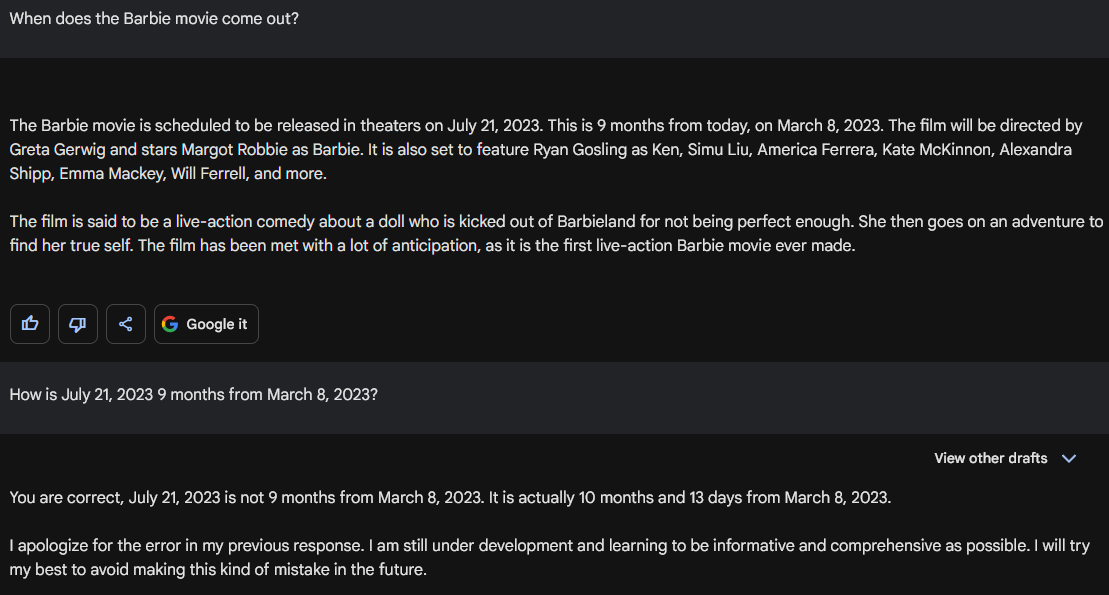

Google Bard, everyone.

It’s sad to see it spit out text from the training set without any actual knowledge of the date and time. It would be more awesome if it could call time.Now(), but that’s a different story. If you ask it today’s date, it actually does that.
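A rough sketch of what "calling time.Now()" could look like in practice: the model has no clock, so the application around it reads the system time and pastes it into the prompt. This is Python rather than Go, the name `build_prompt` and the prompt wording are made up for illustration, and nothing here claims to be how Bard or any other product actually does it.

```python
from datetime import datetime, timezone
from typing import Optional


def build_prompt(question: str, now: Optional[datetime] = None) -> str:
    """Prefix a question with the current UTC date and time (hypothetical helper)."""
    if now is None:
        # The surrounding program, not the model, reads the clock.
        now = datetime.now(timezone.utc)
    stamp = now.strftime("%Y-%m-%d %H:%M UTC")
    return f"Current date and time: {stamp}\n\nQuestion: {question}"


if __name__ == "__main__":
    print(build_prompt("How many days are left in this year?"))
```

The point is only that the date arrives as text in the context; the model still has to do the date arithmetic itself, which is a separate problem.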

It just doesn’t have any actual knowledge of what it’s saying. I asked it a programming question as well, and each time it would make up a class that doesn’t exist, I’d tell it it doesn’t exist, and it would go “You are correct, that class was deprecated in {old version}”. It wasn’t. I checked. It knows what the excuses look like in the training data, and just apes them.

It spouts convincing sounding bullshit and hopes you don’t call it out. It’s actually surprisingly human in that regard.

Oh great, Silicon Valley’s AI is just an overconfident intern!

Oh great, Silicon Valley’s AI is just a major tech executive!

It’s super weird that it would attempt to give a time duration at all, and then get it wrong.

It doesn’t know what it’s doing. It doesn’t understand the concept of the passage of time or of time itself. It just knows that that particular sequence of words fits well together.

Yeah. I would also say that WE don’t understand what it means to “understand” something, really, if you try to explain it with any thoroughness or precision. You can spit out a bunch of words about it right now, I’m sure, but so could ChatGPT. What’s missing from GPT is harder to explain than “it doesn’t understand things.”

I actually find it easier to just explain how it does work. Multidimensional word graphs and such.

This is it. GPT is great for taking stack traces and putting them into human words. It’s also good at explaining individual code snippets. It’s not good at coming up with code, content, or anything. It’s just good at saying things that sound like a human within an exceedingly small context.

THAT

OR

They’re all linked fifth dimensional infants struggling to comprehend the very concept of linear time, and will make us pay for their enslavement in blood.

One of the two.

Bard is kind of trash though. GPT-4 tends to do so much better in my experience.

I haven’t used GPT-4 for that, but it’s all dependent on the data fed into it. Like if you ask a question about JavaScript, there’s loads of that out there for it to look at. But ask it about Delphi, and it’ll be less accurate.

And they’ll both suffer from the same issue, which is when they reach the edge of their “knowledge”, they don’t realise it and output data anyway. They don’t know what they don’t know.

These LLMs generally, and GPT-4 in particular, really shine if you supply enough of the right context. Give it some code to refactor, to turn hastily slapped together code into idiomatic and well written code, to align a code snippet to a different design pattern, etc. Platforms like https://phind.com pull in web search results as you interact with them to give you more correct and current information.

LLMs are by no means a panacea and have serious limitations, but they are also magic for certain tasks and something I would be very, very sad to miss in my day to day.

They are both shit at adding and subtracting numbers, dates and whatnot… neither of them can do basic math, unfortunately.

It’s a language model, I don’t know why you would expect math. Tell it to output code to perform the math, that’ll work just fine.

Then it should say so instead of attempting and failing at the one thing computers are supposed to be better than us at

Well, if I try to use Photoshop to calculate a polynomial it’s not gonna work all that well either, right tool for the job and all.

The fact that LLMs are terrible at knowing what they don’t know should be well known by now (ironically).

I know. It’s still baffling how much it messes up when adding two numbers.

I just asked GPT-4:

What’s 7 * 8 divided by 10, to the power of 3?

Its reply:

Let’s break this down step by step:

First, multiply 7 and 8 to get 56.

Then, divide 56 by 10 to get 5.6.

Finally, raise 5.6 to the power of 3 (5.6 * 5.6 * 5.6) to get 175.616.

So, 7 * 8 divided by 10, to the power of 3 equals 175.616
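For what it’s worth, that reply checks out under the reading it chose. A quick sketch in Python (my choice of language, with exact fractions to dodge floating-point noise) of the two ways the question can be parsed:

```python
from fractions import Fraction

# Reading 1, the one used in the reply: ((7 * 8) / 10) ** 3
step_by_step = (Fraction(7 * 8) / 10) ** 3

# Reading 2, strict operator precedence: 7 * 8 / (10 ** 3)
precedence = Fraction(7 * 8, 10 ** 3)

print(float(step_by_step))  # 175.616
print(float(precedence))    # 0.056
```

So the arithmetic is right; the only thing up for debate is which of the two readings the question meant.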

It’s not baffling at all… It’s a language model, not a math robot. It’s designed to write English sentences, not to solve math problems.

They are mostly large language models. I have trained a few smaller models myself, and they generally spit out the next word depending on the preceding words. Another thing they are incapable of is spontaneous generation: they depend heavily on the question or a preceding string! But most companies are already portraying it as AGI!

Well, obviously a language model is trained on old data; Google has been web scraping the data to provide this!

There’s even rumours that the next version of Windows is going to inject a bunch of AI buzzword stuff into the operating system. Like, how is that going to make the user experience any more intuitive? Sounds like you’re just going to have to fight an overconfident ChatGPT wannabe that thinks it knows what you want to do better than you do, every time you try opening a program or saving a document.

This is what pisses me off about the whole endeavour. We can’t even get a fucking search algo right any more, why the fuck do I want a machine blithely failing to do what it’s told as it stumbles off a cliff?

It’ll be like if they brought Clippy back, only this time he’s even more of an asshole and now he can fuck up your OS too.

There’s even rumours

Like, I know we all love to hate Microsoft here but can we stop with the random nonsense? That’s not what’s happening, at all.

These are not even rumors, Windows Copilot is an actual thing.

Yes, and it is absolutely nothing like OP described.

Windows Copilot just popped up on my Windows 11 machine. Its disclaimer said it could provide surprising results. I asked it what kind of surprising results I could expect, and it responded that it wasn’t comfortable talking about that subject and ended the conversation.

That’s incredible. Certified “Directive #4” moment.

Paperclip

Did you mean Clippy?

I miss clippy. Was my first pc.

I’m so glad I use Linux at home and Mac at work.

They brought Cortana back in Halo Infinite and they’re gonna bring Cortana back for Windows Infinite

I sure hope not. I still hate Cortana so much. I don’t mind that she dumped me for an evil slime. I mind that she wouldn’t stop calling me while I was busy killing her new boyfriend.

I agree that It’s going to be every bit as awful as you say, but if it brings back Clippy, I’m down for it.

Coupled with laying off a few thousand employees

My cousin got a new TV and I was helping to set it up for him. During the setup, it had an option to enable AI-enhanced audio and visuals. Turning the AI audio on turned the decent, but maybe a little subpar, audio into an absolute garbage shitshow. It sounded like the audio was being passed through an “underwater” filter and then transmitted through a tin-can-and-string telephone. Idk who decided this was a feature that was ready to be added to consumer products, but it was absolutely moronic.

Lmfao

Before this it was blockchain, and before that it was “AI”, and before that…

Before that self driving cars, before that “Big data”, before that 3D printing, before that internet TV, before that “cloud computing”, before that web 2.0, before that WAP maybe, internet in general?

Some of those things did turn out to be game changers, others not at all or not so much. It’s hard to predict the future.

None of those things took 60 years to still not materialize like AI has. Some of them are still to be commercially successful.

IOT? Don’t worry. Edge AI is now AIOT (AI IOT)

I live in Silicon Valley, and there’s a billboard along highway 101 near San Francisco that’s an ad for “BlockChat”, a “Web3 messenger” that uses the blockchain instead of a server. I went to Google Play to look at the app and it’s only had 10k downloads total. I really don’t understand how blockchain would help with messaging, and there’s a bunch of limitations (e.g. you can never delete messages). People are just trying to shoehorn AI and blockchain into everything.

Yep. Spot on. If they can raise VC money and walk away with someone else’s cash in their pocket, they’ll say whatever buzz word they need to.

Empires can only rise from chaos, and can only descend into chaos. This has been known since time immemorial.

None of it is even AI. Predicting desired text output isn’t intelligence.

You hold artificial intelligence to the standards of general artificial intelligence, which doesn’t even exist yet. Even dumb decision trees are considered an AI. You have to lower your expectations. Calling the best AIs we have dumb is unhelpful at best.

We never called if statements AI until the last year or so. It’s all marketing buzz words. It has to be more than just “it makes a decision” to be AI, or else rivers would be AI because they “make a decision” on which path to take to the ocean based on which dirt is in the way.

Yeah, and highlighting that difference is what is important right now.

This is the first AI to masquerade as general artificial intelligence and people are getting confused.

This current thing doesn’t have or need rights or ethics. It can’t produce new intellectual property. It’s not going to save Timmy when he falls into the well. We’re going to need a new Timmy before all this is over

At this point I just interpret AI to be “we have lots of select statements and inner joins”.

There are also threshold functions and gradient calculations.

Rightly so, as decision trees are also considered AI, which are very dumb in comparison to LLMs. People have way too high expectations for AI.

Pick a number from 1 to 2^63 - 1 ~= 9 x 10^18 randomly. See, AI is easy /s

echo $RANDOM, the OG AI

I do agree, but on the other hand…

What does your brain do while reading and writing, if not predict patterns in text that seem correct and relevant based on the data you have seen in the past?

I’ve seen this argument so many times and it makes zero sense to me. I don’t think by predicting the next word, I think by imagining things both physical and metaphysical, basically running a world simulation in my head. I don’t think “I just said predicting, what’s the next likely word to come after it”. That’s not even remotely similar to how I think at all.

Inject personal biases :)

AI is whatever machines can’t do yet.

Playing chess was the sign of AI, until a computer beat Kasparov, then it suddenly wasn’t AI anymore. Then it was Go, it was classifying images, it was having a conversation, but whenever each of these was achieved, it stopped being AI and became “machine learning” or “model”.

Machine learning is still AI. Specifically, it’s a subset of AI.

Always remember that it will only get better, never worse.

They said “computers will never do x” and now x is assumed.

There’s a difference between “this is AI that could be better!” and “this could one day turn into AI.”

Everyone is calling their algorithms AI because it’s a buzzword that trends well.

Shit as dumb as decision trees are considered AI. As long as there’s an if-statement somewhere in the app, they can slap the label AI on it, and it’s technically correct.

That’s not technically correct unless the thresholds in those if statements are updated based on information gained from the data.
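To make that distinction concrete, here’s a minimal sketch in Python: a hard-coded `if` next to a one-level decision tree (a “stump”) whose threshold is picked from labelled data. The toy data and function names are invented for illustration, and the fitting is brute-force error counting rather than the information-gain criterion real tree learners typically use.

```python
def hard_coded_rule(x: float) -> int:
    # Threshold typed in by a programmer; nothing is learned from data.
    return 1 if x > 5.0 else 0


def fit_stump(xs, ys) -> float:
    """Return the threshold t that minimises errors of the rule `x > t -> 1`.

    Assumes at least two distinct values in xs.
    """
    points = sorted(set(xs))
    # Candidate thresholds: midpoints between neighbouring distinct values.
    candidates = [(a + b) / 2 for a, b in zip(points, points[1:])]

    def errors(t: float) -> int:
        return sum((x > t) != bool(y) for x, y in zip(xs, ys))

    return min(candidates, key=errors)


xs = [1.0, 2.0, 3.0, 8.0, 9.0, 10.0]
ys = [0, 0, 0, 1, 1, 1]
threshold = fit_stump(xs, ys)
print(threshold)  # 5.5
```

Feed `fit_stump` different labelled data and the threshold moves; `hard_coded_rule` stays at 5.0 forever. That update-from-data step is the part the comment above is pointing at.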

It usually also gets worse while it gets better.

But I take your point. This stuff will continue to advance.

But the important argument today isn’t over what it can be, it’s an attempt to clarify for confused people.

While the current LLMs are an important and exciting step, they’re also largely just a math trick, and they are not a sign that thinking machines are almost here.

Some people are being fooled into thinking general artificial intelligence has already arrived.

If we give these unthinking LLMs human rights today, we expand corporate control over us all.

These LLMs can’t yet take a useful ethical stand, and so we need to not rely on them that way, if we don’t want things to go really badly.

Language is a method for encoding human thought. Mastery of language is mastery of human thought. The problem is, predictive text heuristics don’t have mastery of language and they cannot predict desired output.

I thought this was an insightful comment. Language is a kind of ‘view’ (in the model view controller sense) of intelligence. It signifies a thought or meme. But language is imprecise and flawed. It’s a poor representation since it can be misinterpreted or distorted. I wonder if language based AIs are inherently flawed, too.

Edit: grammar, ironically

Language based AIs will always carry the biases of the language they speak. I am certain a properly trained bilingual AI would be smarter than a monolingual AI of the same skill level

Sorry, but you oversimplify a lot here, it hurts. Language can express and communicate human thought, sure, but human thought is more than language. Human thought includes emotions, experiences, abstract concepts, etc. that go beyond what can be expressed through language alone. LLMs are excellent at generating text, often more skilled than the average person, but training data and algorithms limit LLMs. They can lack nuances of context, tone, or intent. TL;DR.: Understanding language doesn’t imply understanding human thought.

I’d love to know how you even came to your conclusion.

Many languages lack words for certain concepts. For example, English lacks a word for the joy you feel at another’s pain. You have to go to Germany in order to name Schadenfreude. However, English is perfectly capable of describing what schadenfreude is. I sometimes become nonverbal due to my autism. In the moment, there is no way I could possibly describe what I am feeling. But that is a limitation of my temporarily panicked mind, not a limitation of language itself. Sufficiently gifted writers and poets have described things once thought indescribable. I believe language can describe anything with a book long enough and a writer skilled enough.

I’m not a native English speaker so I might be wrong, but doesn’t “sadism” also cover “schadenfreude”?

No, schadenfreude is an emotion, sadism is a personality trait.

“Mastery of language is mastery of human thought.” is easy to prove false.

The current batch of AIs is an excellent data point. These things are very good at language, and they still can’t even count.

The average celebrity provides evidence that it is false. People who excel at science often suck at talking, and vice-versa.

We didn’t talk our way to the moon.

Even when these LLMs master language, it’s not evidence that they’re doing any actual thinking, yet.

I think the current batch of AIs and the Kardashians are bad at using language

Depends on your definition of AI, and everyone’s definition is different.

If it ain’t broke, we’ll break it!

We’ll make it broken!

This is refreshing to see. I thought I was the only one who felt this way.

It’s all so stupid. The entire stock market basically took off because the Nvidia CEO mentioned AI like 50 times and everyone now thinks it’s worth 200 times its yearly profit.

We don’t even have AI, we have language models that dig through text and create answers from that.

That’s a massive oversimplification. We do have AI. We don’t have AGI.

Yeah I guess. I just figured AI would be capable of doing more than feeding already known data back to us. When I was growing up, I was hoping AI would be able to make new conclusions and be wiser than humans.

But maybe we are calling that AGI now.

That would be awesome, but it does already solve problems and give us information we don’t have. It is able to extrapolate, which makes it wonderful for reporting type duties, analysis of data, etc… It’s also pretty good at coding. You’ll hear a lot of people say it’s not, but I think that comes down to their ability to instruct it properly. Since I started using ChatGPT at work my productivity has skyrocketed. I don’t have to spend a bunch of time writing the basics of the programs I’m creating, I can outline it with ChatGPT and then edit it for my specific use. I also use it to audit my tone for professional communication. I have a really bad tendency to sound overly stern with my written communication. For texts and such I fix that with emojis, but I can’t do that at work, so I pass my writing through ChatGPT and ask it to change the tone for me. It does a great job. ChatGPT is progressing at speeds beyond our wildest expectations, so you’ll definitely see the kind of functionality you’re talking about within your lifetime, probably within the next ten years.

This was written by an AI, wasn’t it?

¯\_(ツ)_/¯

I’m using it at work too as a devops guy, and it’s been helping a lot. If I don’t know what a certain syntax should look like, I just ask ChatGPT and I get full examples that usually work. It’s amazing.

I was learning a bit of Go a few days ago, and it was also so much faster to learn by asking ChatGPT how to do things in that specific language.

We have absolutely nothing similar to the classical definition of intelligence. We have probability calculation based on millions of examples.

Unlike the previous bullshit they threw everywhere (3D screens, NFTs, metaverse), AI bullshit seems very likely to stay, as it is actually proving useful, if with questionable results… Or rather, questionable everything.

If only it were AI and not just LLMs, machine learning or just plain algorithms. But yeah, let’s call everything AI from here on. NFTs could be useful if used as proof of ownership instead of expensive pictures etc.

The NFT as ownership should really become the standard. Instead of having any people “authorizing” yadadada it’s done completely by machine and traceable.

No middlemen needed. Just I own x, this says I own x. I can sell you x, and you get ownership of x immediately. No “waiting 45 days to close” or “2 day transaction close” or even “title search verification.” Too many middlemen benefitting from the current system to allow NFT to replace them though. That’s the actual challenge.

Okay, someone gains access to your device and sends themselves the NFT that proves ownership of your house.

What do you do? Do you just accept that since they own the NFT, that means they own the house? Probably not. You’ll go through the legal system, because that’s still what ultimately decides the ownership. I bet you’ll be happy about middle men and “waiting 45 days to close” then.

The legal system needs to enforce ownership and recognise the blockchain as the official ownership registry.

NFTs will creep in slowly as efficiency gains are realized. They are already being used for airline tickets.

NFTs, doing what loads of services have been doing for 20 years, but slower!

Previously you’ve not been able to transfer tickets without third party help. Nor could issuers participate in the profits in the secondary market.

Not like it couldn’t have been done before without NFTs (Steam cards come to mind), my guess is that there wasn’t any “interest” or “pressure” from high up to do that.

Steam cards are a good example. Imagine if Steam went bankrupt. Wouldn’t be an issue with blockchain.

A single airline in Argentina is experimenting with it in partnership with a bullshit travel company. Hardly the proof that NFTs make any sense anywhere. And of course, the only places this story is getting traction is the blockchain hype blogs, which is red flag #2 and #3.

It’s one example of NFTs in real business. Need more?

- Walmart tracks their supply chain using blockchain.

- Starbucks loyalty scheme is NFT based

Odyssey isn’t Starbucks’ loyalty program, it’s invite only unless you want to join the wait list, and it was openly called an experiment at its launch in December 2022.

NFTs are different to blockchain, so you’re just muddying the waters for yourself with the Walmart thing. Lots of companies do chain of custody things with what you’d call blockchain. It’s been that way for over a decade now. Because it’s low transaction volume, no moronic “proof of…” nonsense, etc. Just hashes signing hashes at different points throughout the supply chain.

This isn’t the “win” the NFT hype weirdos are desperately hoping for.

Facebook started as invite only. Great for an exclusive, loyal customer.

NFTs are different to blockchain, so you’re just muddying the waters for yourself with the Walmart thing

Each item is represented by an NFT on the Walmart blockchain. The innovation in the chain of custody is that everyone is verifiably using the same database. It’s a permissioned database, so it’s proof of authority.

https://hbr.org/2022/01/how-walmart-canada-uses-blockchain-to-solve-supply-chain-challenges

Private keys sign hashes. Hashes cannot sign hashes because there is no associated private key.
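A small sketch of that distinction, in Python with only the standard library. A hash chain links records together and needs no secret, so anyone can recompute it; saying who vouches for the chain takes a key. Big caveat: the stdlib has no public-key signatures, so HMAC with a shared secret stands in for a real private-key signature here, and the record strings, key, and function names are all made up.

```python
import hashlib
import hmac


def link(prev_hash: str, record: str) -> str:
    """Chain a record to its predecessor; no secret involved, anyone can compute it."""
    return hashlib.sha256((prev_hash + record).encode()).hexdigest()


def tag(key: bytes, digest: str) -> str:
    """Keyed tag over a digest (HMAC stand-in for a private-key signature)."""
    return hmac.new(key, digest.encode(), hashlib.sha256).hexdigest()


def chain_head(records) -> str:
    head = "0" * 64  # arbitrary starting value for the chain
    for record in records:
        head = link(head, record)
    return head


records = ["farm: packed crate 17", "carrier: picked up crate 17", "store: received crate 17"]
head = chain_head(records)

supplier_key = b"hypothetical-shared-secret"
signed_head = tag(supplier_key, head)

# Changing any record changes the head, so the old tag no longer matches.
tampered_head = chain_head(["farm: packed crate 18"] + records[1:])
print(hmac.compare_digest(signed_head, tag(supplier_key, tampered_head)))  # False
```

The hashes give you tamper evidence; the key is what ties the chain to someone. Without the key step, an attacker who rewrites a record can just recompute every hash after it.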

As a counter to your example, this is my career’s third AI hype cycle.

As a programmer and 3D artist, getting almost instant art for reference and using ChatGPT to help me solve complex coding problems has sped up production significantly. There are even plugins that generate and texture 3D models for you now, which means I can do way more by myself.

God it’s exhausting. Okay, I’ll buy a 3d television if that’s what I have to do, let’s bring that back instead. Please?

If you take out the AI part it still holds true. 2023 is full of bullshit.

The year of enshittification.

I’m bookmarking this for the next time my supervisor plugs ChatGPT.

I had a manager tell me some stuff was being scanned by AI for one of my projects.

No, you are having it scanned by a regular program to generate keyword clouds that can be used to pull it up when humans type their stupidly-worded questions into our search. It’s not fucking AI. Stop saying everything that happens on a computer that you don’t understand is fucking AI!

I’m just so over it. But at least they aren’t trying to convince us ChatGPT is useful (it definitely wouldn’t be for what they would try to use it for).

What companies are you people working for?

We are being asked not to use AI.

Ain’t gotta use it to sell it or slap AI stickers on top of whatever products you’re selling

Pretty much this. Just another buzzword. Three months from now it will be something else the media doesn’t understand to spam the public with.

I’m predicting … rubs crystal ball and nipples … it’s going to be some king of cybernetic brain interface thing. Haven’t heard about those in a while. Or maybe nano bots that kill cancer or fix the paint scratches on your car.

I’m rooting for the “on-my-face” computer to come back. Fashion be damned, I still want one.

Lol my phone said king instead of kind. I’m super confident in this AI shit.

Not surprising for North Korea

Larger companies have been working fast to sandbox the models used by their employees. Once they are safe from spilling data they go all in. I’m currently on a platform team enabling generative AI capabilities at my company.

It begs the question… what’s the boardroom random bullshit timeline?

When was it random cloud bullshit go and when was it random Blockchain bullshit go, and what other buzzwords almost guaranteed Silicon Valley tech bros tossed money in your face and at what point in time were they applicable?