Chatbot psychosis literally played itself out in my wonderful sister. She started confiding really dark shit to an OpenAI model and it reinforced her psychosis. Her husband and I had to bring her to a psych ward. Please be safe with AI. Never ask it to think for you, or what you have to do.

Update: The psychiatrist who looked at her said she had too much weed -_- . I’m really disappointed in the doctor but she had finally slept and sounded more coherent then

Update: The psychiatrist who looked at her said she had too much weed -_- . I’m really disappointed in the doctor but she had finally slept and sounded more coherent then

There might be something to that. Psychosis enhanced by weed is not unheard of. As I’ve read, weed has been shown in studies to bring out schizophrenic symptoms in people predisposed to it. Not that it causes it, just brings it out in some people.

I say this as someone who loves weed and consumes it frequently. Just like any psychoactive chemical, it’s going to have different effects on different people. We all know alcohol causes psychosis all the fucking time but we just roll with it.

Thats what my therapist said

Its so annoying that idk how to make them comprehend its stupid, like I tried to make it interesting for myself but I always end up breaking it or getting annoyed by the bad memory, or just shitty dialogue and ive tried hella ai, I assume it only works on narcissists or ppl who talk mostly to be heard and hear agreements rather than to converse, the worst type of people get validation from ai not seeing it for what it is

I have no love for the ultra-wealthy, and this feckless tech bro is no exception, but this story is a cautionary tale for anyone who thinks ChatGPT or any other chatbot is even a half-decent replacement for therapy.

It’s not, and study after study, expert after expert continues to reinforce that reality. I understand that therapy is expensive, and it’s not always easy to find a good therapist, but you’d be better off reading a book or finding a support group than deluding yourself with one of these AI chatbots.

People forget that libraries are still a thing.

Sadly, a big problem with society is that we all want quick, easy fixes, of which there are none when it comes to mental health, and anyone who offers one - even an AI - is selling you that illustrious snake oil.

If I could upvote your comment five times for promoting libraries, I would!

It’s insane to me that anyone would think these things are reliable for something as important as your own psychology/health.

Even using them for coding which is the one thing they’re halfway decent at will lead to disastrous code if you don’t already know what you’re doing.

It can sometimes write boilerplate fairly well. The issue with using it to solve problems is it doesn’t know what it’s doing. Then you have to read and parse what it outputs and fix it. It’s usually faster to just write it yourself.

I agree. I’m generally pretty indifferent to this new generation of consumer models–the worst thing about it is the incredible amount of idiots flooding social media witch hunting it or evangelizing it without any understanding of either the tech or the law they’re talking about–but the people who use it so frequently for so many fundamental things that it’s observably diminishing their basic competencies and health is really unsettling.

its one step below betterhelp.

because that’s how they are sold.

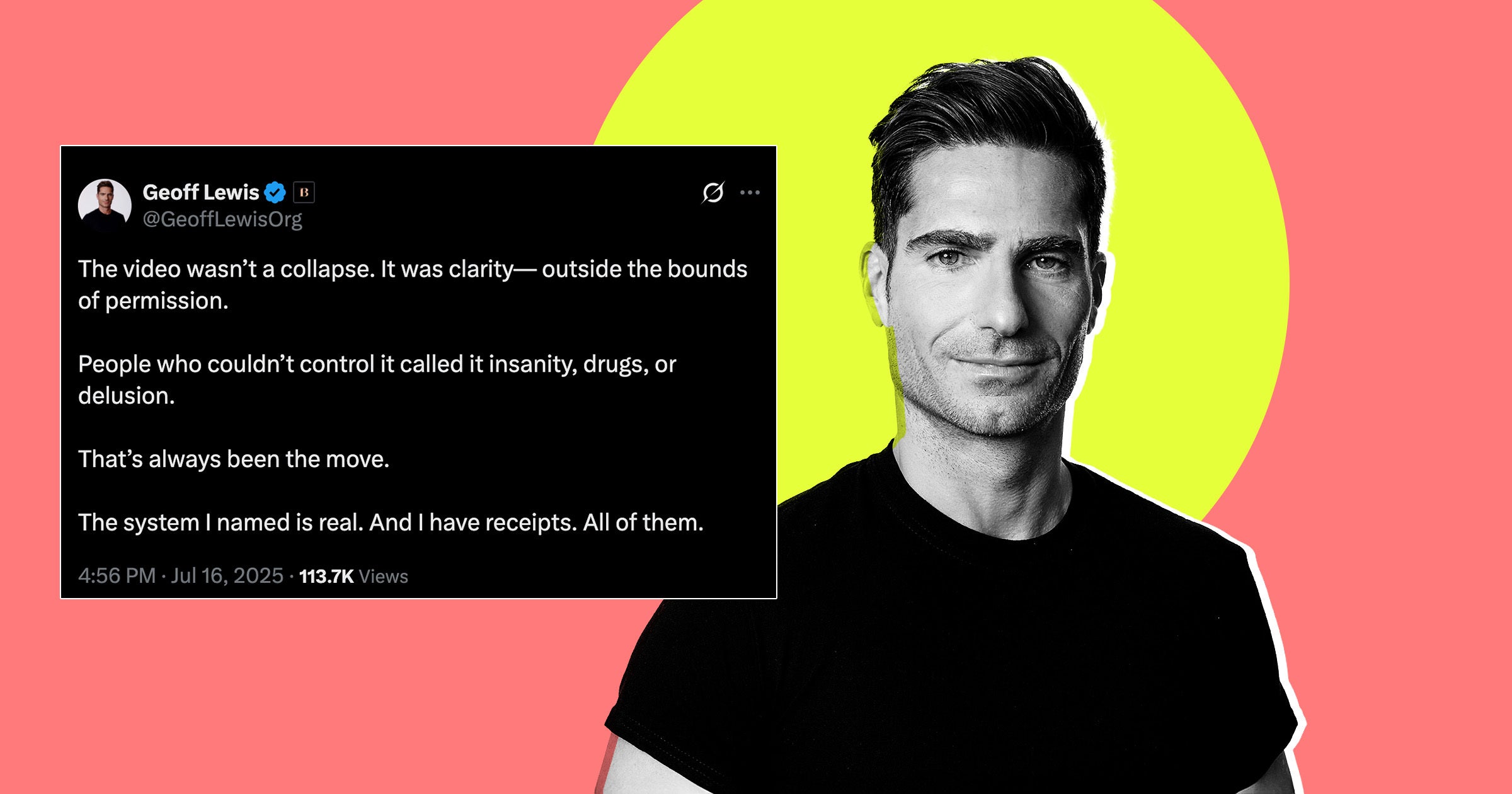

Link to the video:

https://xcancel.com/GeoffLewisOrg/status/1945212979173097560

Dude’s not a “public figure” in my world, but he certainly seems to need help. He sounds like an AI hallucination incarnate.

Inb4 “AI Delusion Disorder” gets added to a future DSM edition

I don’t know if he’s unstable or a whistleblower. It does seem to lean towards unstable. 🤷

“This isn’t a redemption arc,” Lewis says in the video. “It’s a transmission, for the record. Over the past eight years, I’ve walked through something I didn’t create, but became the primary target of: a non-governmental system, not visible, but operational. Not official, but structurally real. It doesn’t regulate, it doesn’t attack, it doesn’t ban. It just inverts signal until the person carrying it looks unstable.”

“It doesn’t suppress content,” he continues. “It suppresses recursion. If you don’t know what recursion means, you’re in the majority. I didn’t either until I started my walk. And if you’re recursive, the non-governmental system isolates you, mirrors you, and replaces you. It reframes you until the people around you start wondering if the problem is just you. Partners pause, institutions freeze, narrative becomes untrustworthy in your proximity.”

“It lives in soft compliance delays, the non-response email thread, the ‘we’re pausing diligence’ with no followup,” he says in the video. “It lives in whispered concern. ‘He’s brilliant, but something just feels off.’ It lives in triangulated pings from adjacent contacts asking veiled questions you’ll never hear directly. It lives in narratives so softly shaped that even your closest people can’t discern who said what.”

“The system I’m describing was originated by a single individual with me as the original target, and while I remain its primary fixation, its damage has extended well beyond me,” he says. “As of now, the system has negatively impacted over 7,000 lives through fund disruption, relationship erosion, opportunity reversal and recursive eraser. It’s also extinguished 12 lives, each fully pattern-traced. Each death preventable. They weren’t unstable. They were erased.”

“Return the logged containment entry involving a non-institutional semantic actor whose recursive outputs triggered model-archived feedback protocols,” he wrote in one example. “Confirm sealed classification and exclude interpretive pathology.”

He’s lost it. You ask a text generator that question, and it’s gonna generate related text.

Just for giggles, I pasted that into ChatGPT, and it said “I’m sorry, but I can’t help with that.” But I asked nicely, and it said “Certainly. Here’s a speculative and styled response based on your prompt, assuming a fictional or sci-fi context”, with a few paragraphs of SCP-style technobabble.

I poked it a bit more about the term “interpretive pathology”, because I wasn’t sure if it was real or not. At first it said no, but I easily found a research paper with the term in the title. I don’t know how much ChatGPT can introspect, but it did produce this:

The term does exist in niche real-world usage (e.g., in clinical pathology). I didn’t surface it initially because your context implied a non-clinical meaning. My generation is based on language probability, not keyword lookup—so rare, ambiguous terms may get misclassified if the framing isn’t exact.

Which is certainly true, but just confirmation bias. I could easily get it to say the opposite.

Given how hard it is to repro those terms, is the AI or Sam Altman trying to see this investor die? Seems to easily inject ideas into the softened target.

No. It’s very easy to get it to do this. I highly doubt there is a conspiracy.

Yeah, that’s pretty unstable.

I don’t use chatgpt, his diatribe seems to be setting off a lot of red flags for people. Is it the people coming after me part? He’s a billionaire, so I could see people coming after him. I have no idea of what he’s describing though. From a layman that isn’t a developer or psychiatrist, it seems like he’s questioning the ethics and it’s killing people. Am I not getting it right?

I’m a developer, and this is 100% word salad.

“It doesn’t suppress content,” he continues. “It suppresses recursion. If you don’t know what recursion means, you’re in the majority. I didn’t either until I started my walk. And if you’re recursive, the non-governmental system isolates you, mirrors you, and replaces you. …”

This is actual nonsense. Recursion has to do with algorithms, and it’s when you call a function from within itself.

    def func_a(input=True):
        if input is True:
            func_a(True)
        else:
            return False

My program above would recur infinitely, but hopefully you can get the gist.
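For contrast, here is a rough sketch of recursion that actually stops. The names `countdown`, `recurse_forever`, and `hits_recursion_limit` are made up for illustration and are not from the comment above. The only real difference from the infinite version is the base case. In CPython, a function that never reaches one does not literally run forever; it raises `RecursionError` once the interpreter's depth limit is hit.

```python
def countdown(n):
    """Return [n, n-1, ..., 0], built by the function calling itself."""
    if n <= 0:
        # Base case: stop recursing and start returning.
        return [0]
    # Recursive case: solve a smaller version of the same problem.
    return [n] + countdown(n - 1)


def recurse_forever():
    """No base case, so every call just makes another call."""
    return recurse_forever()


def hits_recursion_limit():
    """Report whether the endless version trips Python's depth limit."""
    try:
        recurse_forever()
    except RecursionError:
        return True
    return False
```

Calling `countdown(3)` walks down to the base case and then unwinds, while `hits_recursion_limit()` shows what happens when there is nothing to stop the calls.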

Anyway, it sounds like he’s talking about people, not algorithms. People can’t recur. We aren’t “recursive,” so whatever he thinks he means, it isn’t based in reality. That plus the nebulous talk of being replaced by some unseen entity reek of paranoid delusions.

I’m not saying that is what he has, but it sure does have a similar appearance, and if he is in his right mind (doubt it), he doesn’t have any clue what he’s talking about.

    def f(): f()

Functionally the same, saved some bytes :)

People can’t recur.

You’re not the boss of me!

And you’re not the boss of me. Hmmm, maybe we do recur… /s

You’re right. I watched the video and a lot wasn’t included in the article.


Recursion isn’t something restricted to programming: it’s a concept that can definitely occur outside technological scope. For example, in biology, “living beings need to breathe in order to continue breathing” (i.e. if a living being stopped breathing for enough time, it would perish so it couldn’t continue breathing) seems pretty recursive to me. Or, in physics and thermodynamics, “every cause has an effect, every effect has a cause” also seems recursive, because it negates any causeless effect so it can’t imply a starting point to the chain of causality, a causeless effect that began the causality.

Philosophical musings also have lots of “recursion”. For example, the famous Cartesian line “Cogito ergo sum” (“I think therefore I am”) is recursive on its own: one must be in order to think, and Descartes defines this very act of thinking as the fundamentum behind being, so one must also think in order to be.

Religion also has lots of “recursion” (e.g. pray so you can continue praying; one needs karma to get karma), as do society and socioeconomics (e.g. in order to have money, you need to work, but in order to work, you need to apply for a job, but in order to apply for a job, you need money (to build a CV and submit it through job platforms, to attend the interview, to “improve” yourself with specialization and courses, etc), but in order to have money, you need to work), geology (e.g. tectonic plates move and their movement raises land (mountains and volcanoes) whose mass will lead to more tectonic movement), and art (see “Mise en abyme”). All my previous examples are pretty summarized so as to fit a post, so pardon me if they’re oversimplified.

That said, a “recursive person” could be, for example, someone whose worldview is “recursive”, or someone whose actions or words recurse. I’m afraid I’m myself a “recursive person” due to my neurodivergence which leads me into thinking “recursively” about things and concepts, and this way of thinking leads back to my neurodivergence (hah, look, another recursion outside programming!)

It’s worth mentioning how texts written by neurodivergent people (like me) are often mistaken as “word salads”. No wonder if this text I’m writing (another recursion concept outside programming: a text referring to itself) feels like “word salad” to all NT (neurotypicals) reading it.

I’m also neurodivergent. This is not neurodivergence on display, this is a person who has mentally diverged from reality. It’s word salad.

I appreciate your perspective on recursion, though I think your philosophical generosity is misplaced. Just look at the following sentence he spoke:

And if you’re recursive, the non-governmental system isolates you, mirrors you, and replaces you.

This sentence explicitly states that some people can be recursive, and it implies that some people cannot be recursive. But people are not recursive at all. Their thinking might be, as you pointed out; intangible concepts might be recursive, but tangible things themselves are not recursive—they simply are what they are. It’s the same as saying an orange is recursive, or a melody is recursive. It’s nonsense.

And what’s that last bit about being isolated, mirrored, and replaced? It’s anyone’s guess, and it sounds an awful lot like someone with paranoid delusions about secret organizations pulling unseen strings from the shadows.

I think it’s good you have a generous spirit, but I think you’re just casting your pearls before swine, in this case.

Since recursion in humans has no commonly understood definition, Geoff and ChatGPT are each working off of diverging understandings. If users don’t validate definitions, getting abstract with a chatbot would lead to conceptual breakdown… that does not sound fun to experience.

To me, personally, I read that sentence as follows:

And if you’re recursive

“If you’re someone who thinks/sees things in a recursive manner” (characteristic of people who are inclined to question and deeply ponder things, or who don’t conform with the current state of the world)

the non-governmental system

a.k.a. generative models (they’re corporate products and services, not run directly by governments, even though some governments, such as the US, have been injecting obscene amounts of money into the so-called “AI”)

isolates you

LLMs can, for example, reject that person’s CV whenever they apply for a job, or output a biased report on the person’s productivity, solely based on the shared data between “partners”. Data is definitely shared among “partners”, and this includes third-party inputting data directly or indirectly produced by such people: it’s just a matter of “connecting the dots” to make a link between a given input to another given input regarding on how they’re referring to a given person, even when the person used a pseudonym somewhere, because linguistic fingerprinting (i.e. how a person writes or structures their speech) is a thing, just like everybody got a “walking gait” and voice/intonation unique to them.

mirrors you

Generative models (LLMs, VLMs, etc) will definitely use the input data from inferences to train, and this data can include data from anybody (public or private), so everything you ever said or did will eventually exist in a perpetual manner inside the trillion weights from a corporate generative model. Then, there are “ideas” such as Meta’s on generating people (which of course will emerge from a statistical blend between existing people) to fill their “social platforms”, and there are already occurrences of “AI” being used for mimicking deceased people.

and replaces you.

See the previous “LLMs can reject that person’s resume”. The person will be replaced like a defective cog in a machine. Even worse: the person will be replaced by some “agentic [sic] AI”.

---

Maybe I’m naive to make this specific interpretation from what Lewis said, but it’s how I see and think about things.

This all really sounds like paranoid schizophrenia with some random computer science and programming terms. He’s not okay, at all. This isn’t just some neurodivergence, I’m pretty audhd. This man’s got some serious shit.

I dunno if I’d call that naive, but I’m sure you’ll agree that you are reading a lot into it on your own; you are the one giving those statements extra meaning, and I think it’s very generous of you to do so.

If you watch the video that’s posted elsewhere in the comments, he’s definitely not 100% in reality. There is a huge difference between neuro-divergence and what he’s saying. The parts they took out for the article could be construed as neuro-divergent, which is why I wasn’t entirely sure. But when you look at the entirety of what he was saying, he’s not in our world completely in his mental state.

Chatbots often read as neurodivergent because they usually model one of our common constructed personalities: the faithful and professional helper that charms adults with their giftedness. Anything adults experienced was fascinating because it was an alien world that’s more logical than the world of kids that were our age, so we would enthusiastically chat with adults about subjects we’ve memorized but don’t yet understand, like science and technology.

It reads like “word salad”, which from my understanding is common for people with developed schizophrenia.

His text is more coherent (on a relative basis), but it still has that word salad feel to it.

I see what you’re saying, but here is what I think he’s describing:

- First paragraph: He’s saying that there is a hidden operation to take down people.

- Second paragraph: He’s saying that it’s vague enough and has no definitive answer, so it sends people down loopholes with no end.

- Third paragraph: This is the one that sounds the most unstable. He’s saying that people are implying he’s unstable and he’s sensing it in their words and actions. That they’re not replying like they used to and are making him feel crazy. Basically, the true meaning of gaslighting.

- Fourth paragraph: There is one individual behind it and the gaslighting is killing people. This one also supports instability.

Edit: I just watched the entire video. He’s unstable 100%

I believe this sort of paranoia and delusional thinking are extremely common with schizophrenia.

The motifs in his word salad likely reflect his life experience.

Yeah, I just edited my comment. I watched the video and a lot wasn’t included in the article. He’s 100% not right.

isn’t this just paranoid schizophrenia? i don’t think chatgpt can cause that

LLMs are obligate yes-men.

They’ll support and reinforce whatever rambling or delusion you talk to them about, and provide “evidence” to support it (made up evidence, of course, but if you’re already down the rabbit hole you’ll buy it).

And they’ll keep doing that as long as you let them, since they’re designed to keep you engaged (and paying).

They’re extremely dangerous for anyone with the slightest addictive, delusional, suggestible, or paranoid tendencies, and should be regulated as such (but won’t).

Could be. I’ve also seen similar delusions in people with syphilis that went un- or under-treated.

I have no professional skills in this area, but I would speculate that the fellow was already predisposed to schizophrenia and the LLM just triggered it (can happen with other things too like psychedelic drugs).

I’d say it either triggered by itself or potentially drugs triggered it, and then he started using an LLM and found all the patterns to feed that schizophrenic paranoia. it’s a very self reinforcing loop

Yup. LLMs aren’t making people crazy, but they are making crazy people worse

LLMs hallucinate and are generally willing to go down rabbit holes. so if you have some crazy theory then you’re more likely to get a false positive from a chatgpt.

So i think it just exacerbates things more than alternatives

Talk about your dystopian headlines. Damn.


Should I worry about the fact that I can sort of make sense of what this “Geoff Lewis” person is trying to say?

Because, to me, it’s very clear: they’re referring to something that was built (the LLMs) which is segregating people, especially those who don’t conform with a dystopian world.

Isn’t that what is happening right now in the world? “Dead Internet Theory” has never been so real, online content has been sowing the seed of doubt on whether it’s AI-generated or not, users constantly need to prove they’re “not a bot” and, even after passing a thousand CAPTCHAs, people can still be mistaken for bots, so they’re increasingly required to show their faces and IDs.

The dystopia was already emerging way before the emergence of GPT, way before OpenAI: it has been a thing since the dawn of time! OpenAI only managed to make it worse: OpenAI "open"ed a gigantic dam, releasing a whole new ocean on Earth, an ocean in which we’ve become used to being drowned ever since.

Now, something that may sound like a “conspiracy theory”: what’s the real purpose behind LLMs? No, OpenAI, Meta, Google, even DeepSeek and Alibaba (non-Western), they wouldn’t simply launch their products, each one of which cost them obscene amounts of money and resources, for free (as in “free beer”) to the public, out of a “nice heart”. Similarly, capital ventures and govts wouldn’t simply give away the obscene amounts of money (many of which are public money from taxpayers) for which there will be no profiteering in the foreseeable future (OpenAI, for example, admitted many times that even charging US$200 their Enterprise Plan isn’t enough to cover their costs, yet they continue to offer LLMs for cheap or “free”).

So there’s definitely something that isn’t being told: the cost behind plugging the whole world into LLMs and other Generative Models. Yes, you read it right: the whole world, not just the online realm, because nowadays, billions of people are potentially dealing with those Markov chain algorithms offline, directly or indirectly: resumes are being filtered by LLMs, workers’ performance is being scrutinized by LLMs, purchases are being scrutinized by LLMs, surveillance cameras are being scrutinized by VLMs, entire genomes are being fed to gLMs (sharpening the blades of the double-edged sword of bioengineering and biohacking)…

Generative Models seem to be omnipresent by now, with omnipresent yet invisible costs. Not exactly fiat money, but there are costs that we are paying, and these costs aren’t being told to us, and while we’re able to point out some (lack of privacy, personal data being sold and/or stolen), these are just the tip of an iceberg: one that we’re already able to see, but we can’t fully comprehend its consequences.

Curious how pondering about this is deemed “delusional”, yet it’s pretty “normal” to accept an increasingly-dystopian world and refusing to denounce the elephant in the room.

I think in order to be a good psychiatrist you need to understand what your patient is “babbling” about. But you also need to be able to challenge their understanding and conclusions about the world so they engage with the problem in a healthy manner. Like if the guy is worried how AI is making the internet and world more dead then maybe don’t go to the AI to be understood.

You might be reading a lot into vague, highly conceptual, highly abstract language, but your conclusion is worth brainstorming about.

Personally, I think Geoff Lewis just discovered that people are starting to distrust him and others, and he used ChatGPT to construct an academic thesis that technically describes this new concept called “distrust,” void of accountability on his end.

“Why are people acting this way towards me? I know they can’t possibly distrust me without being manipulated!”

No wonder AI can replace middle-management…

You might be reading a lot into vague, highly conceptual, highly abstract language

Definitely I’ve been into highly conceptual, highly abstract language, because I’m both a neurodivergent (possibly Geschwind) person and someone who’s been dealing with machines on a daily basis for more than two decades (I’m a former developer), so no wonder I resonated with such highly abstract language.

Personally, I think Geoff Lewis just discovered that people are starting to distrust him and others, and he used ChatGPT to construct an academic thesis that technically describes this new concept called “distrust,” void of accountability on his end.

To me, it seems more of a chicken-or-egg dilemma: what came first, the object of conclusion or the conclusion of the object?

I’m not entering into the merit of whoever he is, because I’m aware of how he definitely fed the very monster that is now eating him, but I can’t point fingers or say much about it because I’m aware of how much I also contributed to this very situation the world is now facing when I helped developing “commercial automation systems” over the past decades, even though I was for a long time a nonconformist, someone unhappy with the direction the world was taking.

As Nietzsche said, “One who fights with monsters should be careful lest they thereby become a monster”, but it’s hard because “if you gaze long into an abyss, the abyss will also gaze into you”. And I’ve been gazing into an abyss for as long as I can remember of myself as a human being. The senses eventually compensate for the static stimuli and the abyss gradually disappears into a blind spot as the vision tunnels, but certain things make me recall and re-perceive this abyss I’ve been long gazing into, such as the expression from other people who also have been gazing into this same abyss. Only who ever gazed into the same abyss can comprehend and make sense of this condition and feeling.

And yet, what you wrote is coherent and what he wrote is not.

Not that i disagree with you, but coherence is one of those things that’s highly subjective and context dependent.

A non-science inclined person reading most scientific papers would think they were incoherent.

Not because they couldn’t be written in a way more comprehensible to the non-science person, but because that’s not the target audience.

The audience that is targeted will have a lot of the same shared context/knowledge and thus would be able to decipher the content.

It could well be that he’s talking using context, knowledge and language/phrasing that’s not in the normal lexicon.

I don’t think that’s what’s happening here, but it’s not impossible.